What is a service mesh?

Learn about the challenges that a service mesh solves.

A service mesh is a dedicated infrastructure layer that you add your apps to. The service mesh provides central management for your cloud-native microservices architecture. This way, you can optimize your apps in areas like communication, routing, reliability, security, and observability.

What are the challenges in a microservices architecture?

Think of microservices in comparison with another style of application, the monolithic app. Monolithic apps typically have all the business logic, including ingress rules, built in. When you move from monolithic to a microservices architecture, you end up with many smaller services. Each of these “micro” services performs a standalone function of your app. Depending on how big your monolithic app was, the number of microservices can be very high, sometimes in the hundreds or thousands. Monolithic applications solved the problem of how traffic enters the app (north-south traffic). In contrast, microservices also compose or re-use functionality across each other (east-west traffic).

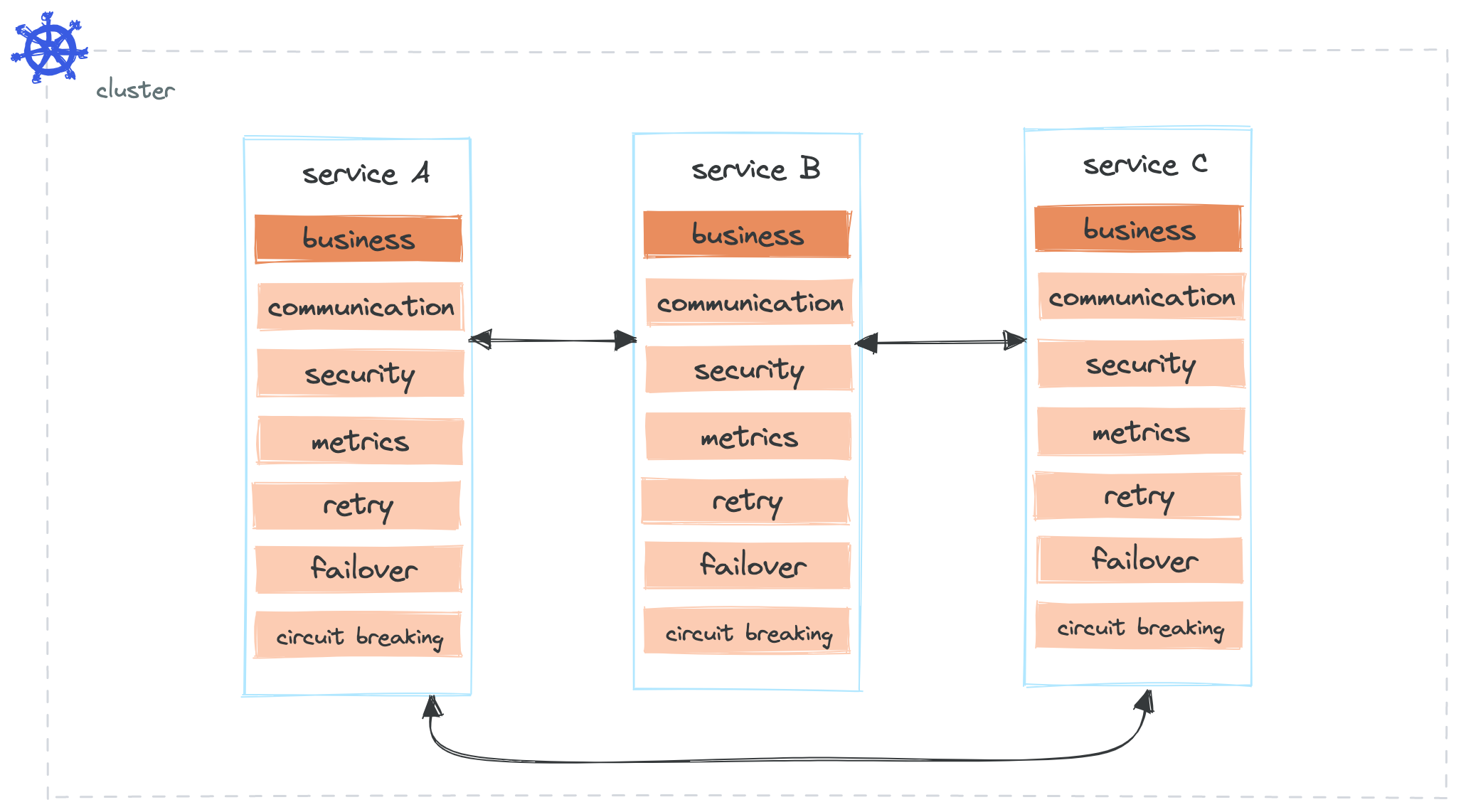

Without a service mesh, each microservice needs not only the business logic, but also connectivity logic. Connectivity challenges include how to discover and connect to other microservices, how to exchange and secure data, and how to monitor network activity. Additionally, microservices change quickly without a full redeployment. They often move dynamically within a Kubernetes cluster. Your app might even spread across multiple clusters, availability zones, or regions for resiliency.

As such, some of the biggest challenges in a microservices architecture include the following:

- Keeping track of the changes across apps, especially as the number of microservices grows.

- Implementing security and compliance standards across all services and clusters.

- Controlling network traffic with consistent policies.

- Troubleshooting network errors.

Let’s take a look at a microservices architecture without a service mesh. Besides implementing the business logic, every microservice must also make sure that it can communicate with other microservices; secure the communication; apply retry and failover policies; manipulate headers depending on which microservice the request is sent to; capture metrics and logs; and, most importantly, keep track of changes in the microservices architecture. While you can manage these tasks across a few microservices, the complexity grows as you add more microservices to your ecosystem.
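To illustrate how connectivity logic ends up inside each microservice, consider the following minimal Python sketch. It is not taken from any real service: the `ServiceUnavailable` exception, the `send` transport callable, and the `get_reviews` function are hypothetical names chosen for this example. The sketch only shows how retry and failover code surrounds a single line of business logic.

```python
from typing import Callable, Optional, Sequence


class ServiceUnavailable(Exception):
    """Raised by the hypothetical transport when an endpoint cannot be reached."""


def call_with_retry_and_failover(
    send: Callable[[str], str],
    endpoints: Sequence[str],
    retries_per_endpoint: int = 2,
) -> str:
    """Try each endpoint in order, retrying before failing over to the next one."""
    last_error: Optional[Exception] = None
    for endpoint in endpoints:                  # failover: move on to the next endpoint
        for _ in range(retries_per_endpoint):   # retry: repeat on the same endpoint
            try:
                return send(endpoint)
            except ServiceUnavailable as err:
                last_error = err
    raise ServiceUnavailable(f"all endpoints failed: {last_error}")


def get_reviews(send: Callable[[str], str], endpoints: Sequence[str]) -> str:
    # The business logic is the single upper() call; everything else is
    # connectivity logic that every microservice would have to repeat.
    return call_with_retry_and_failover(send, endpoints).upper()
```

In this sketch, every microservice that calls another service would need its own copy of `call_with_retry_and_failover`, and any policy change would have to be rolled out to each copy.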

How does a service mesh solve these challenges?

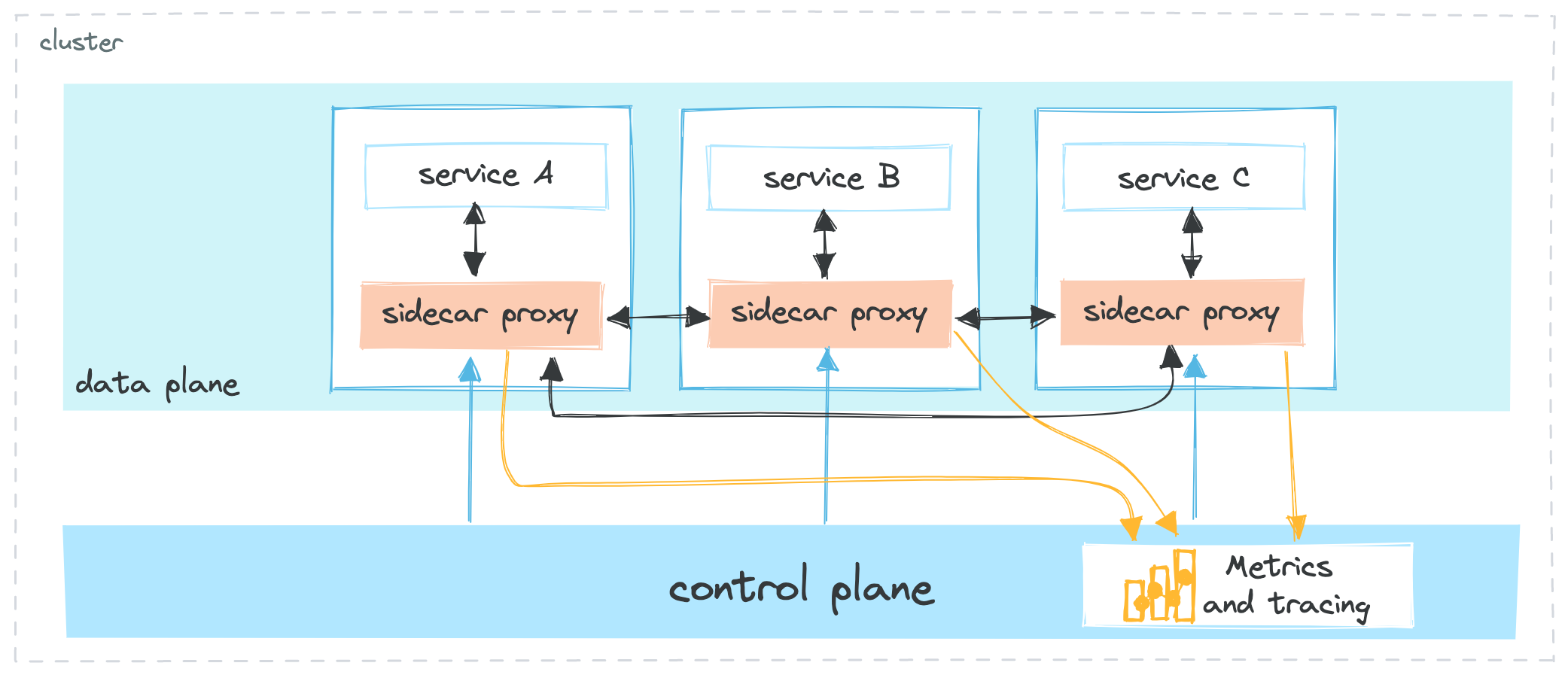

Instead of implementing communication, security, metrics, and other layers in every single microservice, a service mesh abstracts service-to-service communication by using proxies. Proxies, also referred to as sidecars, are deployed alongside your microservice. For example, the proxy can be a container in the same Kubernetes pod as your app. The proxies form the data plane of the service mesh. Because the proxies intercept every communication between the microservices, you can control traffic. For example, you might create rules to forward, secure, or manipulate requests.
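The following Python sketch models the interception idea in a single process. It is a simplified stand-in, not how a real sidecar proxy is implemented: the `Request` and `SidecarProxy` classes, the rule fields, and the `x-transport` header are all assumptions made for illustration. The point is that the rules to forward, secure, or manipulate a request live in the proxy, not in the app.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass
class Request:
    destination: str
    headers: Dict[str, str] = field(default_factory=dict)


@dataclass
class SidecarProxy:
    """Hypothetical in-process stand-in for a sidecar proxy."""
    routes: Dict[str, str] = field(default_factory=dict)       # forwarding rules
    add_headers: Dict[str, str] = field(default_factory=dict)  # header manipulation
    require_mtls: bool = False                                  # security rule

    def handle(self, request: Request, upstream: Callable[[Request], str]) -> str:
        # Forward: rewrite the destination if a routing rule matches.
        destination = self.routes.get(request.destination, request.destination)
        # Manipulate: add headers without the app knowing about them.
        headers = {**request.headers, **self.add_headers}
        # Secure: only mark the hop here; a real proxy would encrypt the connection.
        if self.require_mtls:
            headers["x-transport"] = "mtls"
        return upstream(Request(destination, headers))
```

The app still sends a plain request to `reviews`. Whether that request is rerouted, decorated with headers, or encrypted depends only on the proxy's rules.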

The configuration for each sidecar proxy includes traffic encryption, certificates, and routing rules. The control plane of the service mesh specifies and applies the proxy configuration across microservices. This way, you get consistent networking, security, and compliance across your microservices architecture. All proxies send network traffic metrics back to the control plane, which you can access with an API. Then, you can use these metrics to determine the health of your service mesh.
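As a rough sketch of this relationship, the following Python example models a control plane that distributes one configuration to every proxy and aggregates the metrics that the proxies report. The `Proxy` and `ControlPlane` classes, their fields, and the configuration keys are hypothetical and do not correspond to the API of any particular service mesh.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Proxy:
    service: str
    config: Dict[str, str] = field(default_factory=dict)
    request_count: int = 0   # metrics that the proxy reports back
    error_count: int = 0


@dataclass
class ControlPlane:
    config: Dict[str, str]
    proxies: List[Proxy] = field(default_factory=list)

    def register(self, proxy: Proxy) -> None:
        # A new proxy inherits the current mesh-wide configuration.
        proxy.config = dict(self.config)
        self.proxies.append(proxy)

    def update(self, **changes: str) -> None:
        # Set a rule once, then apply it to every registered proxy.
        self.config.update(changes)
        for proxy in self.proxies:
            proxy.config = dict(self.config)

    def error_rate(self) -> float:
        # Aggregate the reported metrics into one mesh-wide health signal.
        total = sum(p.request_count for p in self.proxies)
        errors = sum(p.error_count for p in self.proxies)
        return errors / total if total else 0.0
```

For example, calling `update(retries="3")` once changes the configuration of every registered proxy, and `error_rate()` summarizes the health of the whole mesh from the per-proxy counters.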

What are the key benefits of a service mesh?

Review the key benefits of moving your microservices architecture to a service mesh.

- Separate business and networking logic: With a service mesh, developers can concentrate on driving strategic business goals rather than spending time on implementing custom communication patterns, managing certificates, or setting up network resiliency. Instead, the service mesh operator or platform admin can implement the networking rules for the service mesh, such as how network traffic is secured, how services are discovered, or what traffic management policies are applied to incoming requests before they are forwarded within the mesh.

- Set policies once and apply them wherever you need: By using the service mesh control plane, you can set the networking rules once, and automatically apply these rules across all sidecar proxies in your service mesh. New microservices automatically inherit these settings and are able to communicate with all services in the mesh through the sidecar proxy.

- Ensure high availability and fault tolerance: With traffic policies, such as retries, failover policies, or fault injections, you can harden your microservices architecture and increase app reliability and resiliency. Because all communication between services is intercepted by the proxy, you can manipulate how microservices communicate with each other, and secure network traffic between them.

- Easily scale your microservices architecture: As the number of your microservices grows, a service mesh can help to manage the increasing networking, routing, and security complexity while minimizing the amount of code that needs to be integrated into each microservice. This allows you to easily scale your microservices architecture and to apply consistent security and compliance standards to all your apps.

- Monitor the service mesh health and performance: By analyzing the data that the proxies send back to the control plane, you gain insight into measures such as failure times or how long it took before a retry succeeded. You can use these measures to optimize traffic policies and ensure network resiliency.
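To make the monitoring benefit more concrete, the following Python sketch computes how long it took before a retry succeeded from a list of recorded attempts. The `Attempt` record and the `time_to_recovery` function are hypothetical and assume that the attempts are sorted by timestamp. Real service meshes expose their metrics in their own formats.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Attempt:
    timestamp_s: float  # seconds since the request was first sent
    succeeded: bool


def time_to_recovery(attempts: List[Attempt]) -> Optional[float]:
    """Return the seconds from the first failed attempt to the first later success."""
    first_failure = next((a for a in attempts if not a.succeeded), None)
    if first_failure is None:
        return 0.0  # nothing failed, so there was nothing to recover from
    for attempt in attempts:
        if attempt.succeeded and attempt.timestamp_s >= first_failure.timestamp_s:
            return attempt.timestamp_s - first_failure.timestamp_s
    return None  # the request never recovered


attempts = [Attempt(0.0, False), Attempt(0.5, False), Attempt(1.5, True)]
print(time_to_recovery(attempts))
```

A measure like this might inform how you tune a retry policy, for example by shortening the delay between attempts if recovery usually happens on the second try.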