Benefits

Learn about the benefits of Gloo Mesh Enterprise and how you can use it in your single and multicluster setup.

With Gloo Mesh Enterprise, you get an extensible, open-source based set of API tools to connect and manage your services across multiple clusters and service meshes.

Have more questions about how Gloo Mesh simplifies application networking concepts in Kubernetes and Istio? Review the YouTube playlist of 1-2 minute long video answers to frequently asked questions about Gloo Mesh, Istio, and other topics about securing application networking traffic. For more information, see the Resource Library page on the Solo.io website.

Use Gloo Mesh as a complete service mesh and API gateway management solution

With an increasingly distributed environment for your apps, you need a flexible, open-source based solution to help meet your traditional and new IT requirements. Solo is laser-focused on developing the best service mesh and API gateway solutions, unlike other offerings that might be a small part of a vendor-locked solution. Furthermore, Gloo Mesh Enterprise is built on the following six principles.

- Secure: You need a zero-trust model and end-to-end controls to implement best practices, comply with strict regulations like FIPS, and reduce the risk of running older versions through security patching.

- Reliable: You need a robust, enterprise-grade tool with features like priority failover and locality-aware load balancing to manage your service mesh and API gateway for your mission-critical workloads.

- Unified: You need one centralized tool to manage and observe your application environments and traffic policies at scale.

- Simplified: Your developers need a simple, declarative, API-based method to provide services to your apps without further coding and without needing to understand the complex technologies like Istio and Kubernetes that underlie your environments.

- Comprehensive: You need a complete solution for north-south ingress and east-west service traffic management across infrastructure resources on-premises and across clouds.

- Modern and open: You need a solution that is designed from the ground up on open-source, cloud-native best practices and leading projects like Kubernetes and Istio to maximize the portability and scalability of your development processes.

See how Gloo Mesh is an Outperformer and Leader in the service mesh space in the following GigaOM Radar report.

Use Gloo Mesh Enterprise for production-ready support

Hardening and managing open source distributions is time-consuming and costly. Your engineering resources can be better invested in developing higher-value services that enhance your core business offerings. In the following table, review the benefits of using an enterprise license instead of open source. Then, continue reading for the benefits of using Gloo Mesh Enterprise in single and multiple clusters.

Looking for a full list of features compared against what’s available in open source? See the Comparison matrix on the product website.

| Benefit | Gloo Mesh Enterprise | Community Istio |
|---|---|---|
| Upstream-first approach to feature development | ✅ | ✅ |
| Installation, upgrade, and management across clusters and service meshes | ✅ | ❌ |
| Advanced features for security, traffic routing, transformations, observability, and more | ✅ | ❌ |
| End-to-end Istio support and CVE security patching for n-4 versions | ✅ | ❌ |
| Specialty builds for distroless and FIPS compliance | ✅ | ❌ |
| 24x7 production support and one-hour Severity 1 SLA | ✅ | ❌ |
| GraphQL and Portal modules to extend functionality | ✅ | ❌ |
| Workspaces for simplified multitenancy | ✅ | ❌ |

Single cluster benefits

With the move to containerized applications, you might find yourself managing a Kubernetes or OpenShift cluster with hundreds of namespaces that each contain several microservices that different development teams are responsible for deploying. You can use Gloo Mesh to manage your application networking across namespaces.

Simplify service mesh management

The Gloo Mesh API reduces the complexity of your service mesh by installing custom resource definitions (CRDs). You configure custom resources based on these CRDs. Then, Gloo Mesh translates these custom resources into Istio resources across your environment, and provides visibility across all of the resources and traffic. Enterprise features include multitenancy, global failover and routing, observability, and east-west rate limiting and policy enforcement through authorization and authentication plug-ins.

Using the Gloo Mesh API helps you simplify your networking setup because you can write advanced configurations one time and apply the same configuration in multiple places and different contexts. For example, you can write a rate limit or authentication policy once. Then, you can apply this policy both to traffic that enters the cluster via Gloo Mesh Gateway (north-south) and to traffic across clusters via the Istio service mesh gateway (east-west).

This API- and CRD-based approach can be integrated into your continuous integration and continuous delivery (CI/CD), DevOps, and GitOps workflows, so that you can track changes, control promotions, roll back when needed, and otherwise automate your processes.

Organize all your team’s resources with workspaces

Gloo introduces a new concept for Kubernetes-based multitenancy, the Workspace custom resource. A workspace consists of one or more Kubernetes namespaces that are in one or more clusters. Think of a workspace as the boundary of your team’s resources. To get started, you can create a workspace for each of your teams. Your teams might start with their apps in a couple of Kubernetes namespaces in a single cluster. As your teams scale across namespaces and clusters, their workspaces scale with them.

For example, you might have a cluster to run a website. Currently, you have a bunch of namespaces for the backend and frontend teams. Although the apps in these namespaces need to talk to each other, you don’t want the teams to have access to the other namespaces. In fact, you don’t even want all the frontend developers to have access to all the frontend namespaces! You also don’t need either team to access the gateway that runs in your cluster.

Gloo Mesh can help you by organizing your namespaces into Workspaces. You can share resources across workspaces by configuring the workspace settings to import and export. To prevent unintentional sharing, both workspace settings must have a matching import-export setup. You can even select particular resources to import or export, such as with Kubernetes labels, names, or namespaces. Your developers do not have to worry about exposing their apps. They deploy the app in their own namespace, and refer to the services they need to use, even if the service is in another namespace or workspace. As long as the appropriate Gloo Mesh CRs are in an imported workspace, Gloo Mesh takes care of the rest.
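The matching rule for import and export can be pictured with a small model. The following Python sketch is not the Gloo Mesh API. The `WorkspaceModel` class, its `import_from` and `export_to` fields, and the `can_share` function are hypothetical names chosen for illustration. The sketch only shows the idea that sharing takes effect when the exporting workspace exports to the importer and the importer imports from the exporter.

```python
from dataclasses import dataclass, field


@dataclass
class WorkspaceModel:
    """Hypothetical, simplified stand-in for a workspace's sharing settings."""
    name: str
    import_from: set[str] = field(default_factory=set)  # workspaces this one imports from
    export_to: set[str] = field(default_factory=set)    # workspaces this one exports to


def can_share(exporter: WorkspaceModel, importer: WorkspaceModel) -> bool:
    """Sharing takes effect only when both sides have a matching setup."""
    return importer.name in exporter.export_to and exporter.name in importer.import_from


backend = WorkspaceModel("backend", export_to={"frontend"})
frontend = WorkspaceModel("frontend", import_from={"backend"})
gateway = WorkspaceModel("gateway", import_from={"backend"})  # backend never exports to gateway

print(can_share(backend, frontend))  # True
print(can_share(backend, gateway))   # False
```

In this model, the `gateway` workspace asks to import from `backend`, but nothing is shared because `backend` does not export to it. This is the kind of unintentional sharing that the two-sided check is meant to prevent.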

The following figure presents an example single cluster scenario. As you can see, your app teams configure the Kubernetes resources in their respective namespaces. Your service mesh admin sets up the Gloo Mesh workspaces to import and export as needed. Then, the admin configures a few custom resources, and Gloo Mesh does the rest. Other Gloo Mesh and Istio custom resources are not only created for you, but also exposed to other workspaces that need them.

Multitenancy for easier, safer onboarding

Gloo Mesh workspaces let you delegate management and policy decisions to your teams. Your platform administrator can create the workspaces and then delegate how to set up the workspace settings to a workspace admin. The workspace admin is your team lead, such as an app owner. Then, the workspace admin sets up the appropriate namespaces and import/export settings with other workspaces. Afterwards, the rest of your teams access only the namespaces in the clusters that they need, as shown in the following figure. This delegation helps you onboard new teams and apps.

Besides onboarding, Gloo Mesh helps you operate your service mesh consistently. The v2 API streamlines the possible inputs and outputs of the Gloo Mesh custom resources. It also simplifies the mapping between Gloo Mesh and Istio resources. These improvements over the v1 API give you the following operational benefits.

- Consistent user experience when configuring resources.

- Enhanced learnability of the API.

- Quicker troubleshooting to identify affected inputs and outputs.

Harden your service mesh lifecycle with n-4 Istio support

The Solo distribution of Istio is a hardened Istio enterprise image, which maintains n-4 support for CVEs and other security fixes. The image support timeline is longer than the community Istio support timeline, which provides n-1 support with an additional 6 weeks of extended time to upgrade the n-2 version to n-1. Based on a cadence of 1 release every 3 months, Gloo Mesh’s n-4 support provides an extra 9 months to run the hardened Istio version of your choice, compared to an open source strategy that also lacks enterprise support. Note that all backported functionality is available in the upstream community Istio, as there are no forked capabilities from community Istio.
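The 9-month figure follows from simple arithmetic, which the following Python sketch works through. The function name is hypothetical. The calculation compares n-4 support against the community n-1 baseline at the stated cadence of one release every 3 months, and it leaves out the 6-week community grace period for n-2.

```python
RELEASE_INTERVAL_MONTHS = 3  # cadence stated above: one release every 3 months


def extra_support_months(enterprise_n_minus: int, community_n_minus: int) -> int:
    """Extra months of patching gained from supporting more prior minor versions."""
    return (enterprise_n_minus - community_n_minus) * RELEASE_INTERVAL_MONTHS


# n-4 versus n-1 is three additional supported versions at 3 months each.
print(extra_support_months(4, 1))  # 9
```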

For more information, see Version support.

Set up a zero-trust architecture for both north-south ingress and east-west service traffic

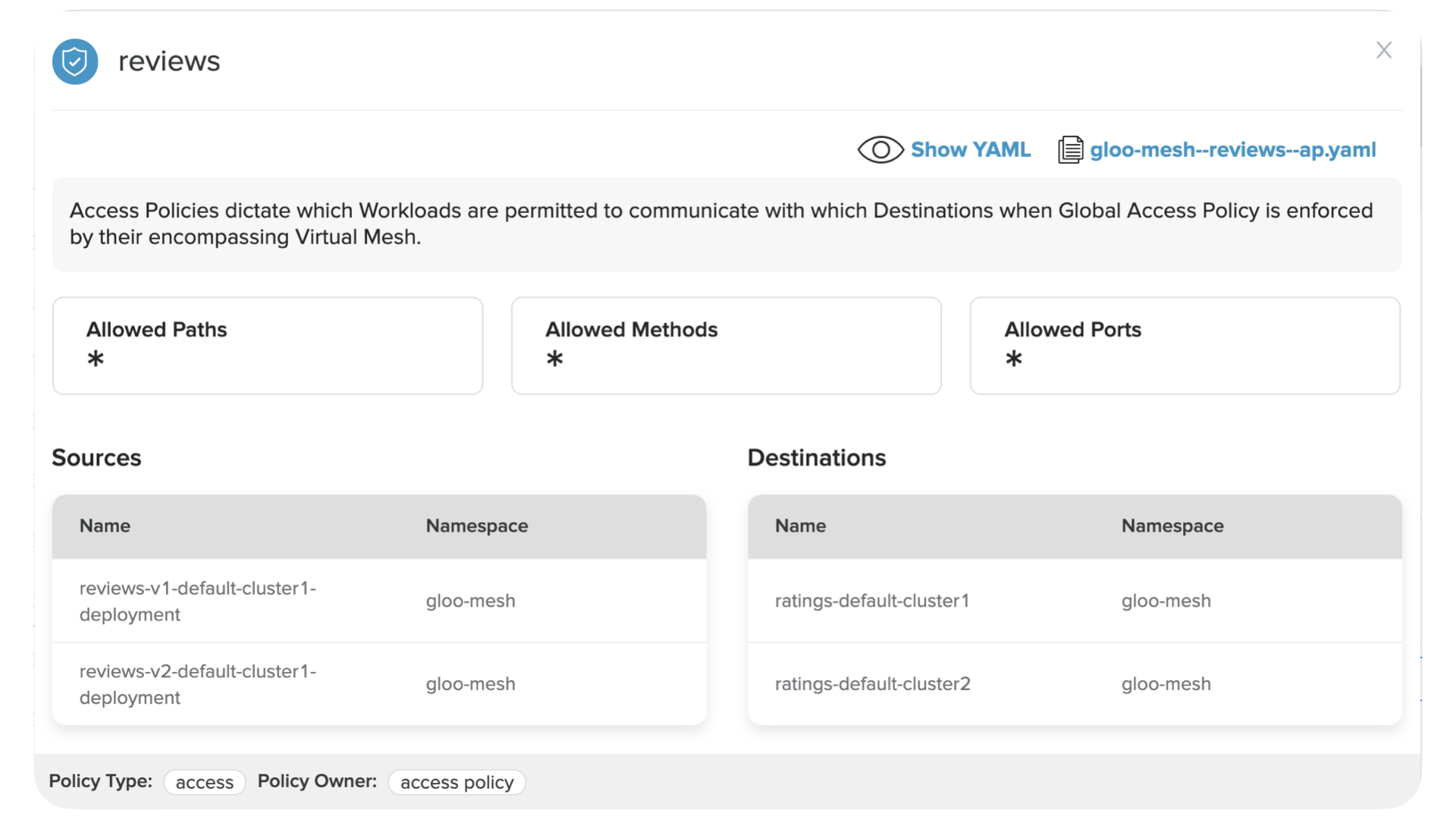

You can set up a zero-trust architecture where trust is established by the attributes of the request, requestor, or environment. By default, no communication is allowed. Use access policies to control which services can communicate across or outside the service mesh.

Then, you can review which running services match the criteria in your policies and can communicate, such as in the following figure. Additionally, you can implement other security measures to control authorization and authentication (AuthZ/AuthN), rate limit, and otherwise shape traffic that enters or travels across your cluster. For more information, see the security guides.
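The default-deny behavior can be sketched as an allow-list lookup. This Python example is a conceptual model only, not how Gloo Mesh or Istio evaluate access policies. The service names and the `ALLOWED` set are hypothetical.

```python
# Hypothetical allow-list: (source service, destination service) pairs
# that an access policy explicitly permits.
ALLOWED = {
    ("frontend", "backend"),
    ("backend", "database"),
}


def is_allowed(source: str, destination: str) -> bool:
    """Default deny: traffic passes only if a policy explicitly allows it."""
    return (source, destination) in ALLOWED


print(is_allowed("frontend", "backend"))   # True
print(is_allowed("frontend", "database"))  # False
```

Because no rule covers `frontend` to `database`, that request is denied without needing an explicit deny rule.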

Multicluster benefits

Similar to a containerized microservices architecture, modern infrastructure architectures favor a distributed environment of many smaller deployment targets, like multiple clusters, for reasons such as the following.

- Fault tolerance

- Compliance and data access

- Disaster recovery

- Scaling needs

- Geographic needs

In addition to all of the benefits you get with single clusters, Gloo Mesh Enterprise helps solve some of your toughest multicluster challenges as described in the following sections. You can also review the benefits of using a service mesh for multicluster adoption in the following video.

Scale clusters with quick registration

As you scale out your apps, you might need to run your apps on-premises as close to the data as possible. You might also need burstable support for surges through a public cloud. As such hybrid environments become increasingly common, you need a service mesh approach that can scale with your workloads. Gloo Mesh simplifies this process by providing tools to register clusters and create virtual meshes that make sense for your workload architecture.

For more information, see Registering workload clusters.

Discover multicluster Istio services

A challenging problem in Istio is discovering services across clusters so that they can communicate.

Istio Endpoint Discovery Service (EDS) requires each Istio control plane to have access to the Kubernetes API server of each cluster, as shown in the following figure. Besides the security concerns with this approach, an Istio control plane cannot start if it is not able to contact one of the clusters, which impacts performance.

Gloo Mesh addresses these problems with its server-agent approach, as shown in the following figure. An agent that runs on each cluster watches the local Kubernetes API server and passes the information to the Gloo Mesh management plane through a secured gRPC channel. Gloo Mesh then tells the agents to create the Istio ServiceEntries that correspond to the workloads that are discovered on the other clusters.
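The flow of information in this approach can be sketched as follows. This Python model is a simplification under stated assumptions: the `remote_entries` function and the cluster and service names are hypothetical, and the real management plane does considerably more than this. The sketch shows only that each agent reports its local services, and that the management plane works out which remote services each cluster needs entries for.

```python
def remote_entries(discovered: dict[str, set[str]]) -> dict[str, set[str]]:
    """For each cluster, collect the services that were discovered on the other clusters."""
    return {
        cluster: {
            service
            for other, services in discovered.items()
            if other != cluster
            for service in services
        }
        for cluster in discovered
    }


# Each agent reports only what it sees on its own cluster.
reports = {"cluster-1": {"reviews"}, "cluster-2": {"ratings", "details"}}
result = remote_entries(reports)

print(sorted(result["cluster-1"]))  # ['details', 'ratings']
print(sorted(result["cluster-2"]))  # ['reviews']
```

No cluster needs direct access to another cluster's Kubernetes API server in this model, because all discovery data passes through the central function.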

For more information, see Relay architecture.

Set up multitenancy across clusters

Building on the single cluster scenario, workspaces extend to multicluster scenarios. A workspace can include namespaces from one or more clusters. You can also import a workspace that was set up in a different cluster, and select the workspace’s resources based on the namespace, labels, names, or other fields. Don’t worry about logging in to multiple clusters to manage your workspaces. Instead, you can create your resources anywhere, and use the workspace settings to manage sharing.

The following figure shows a multicluster version of the previous single cluster figure. As you can see, you don’t have to do much to scale from a single cluster to many clusters. Make sure to name the namespaces so that they match your workspace settings. Gloo Mesh automatically identifies the namespaces and starts managing them via the workspace.
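To picture how consistent naming lets a workspace pick up namespaces on newly added clusters, consider the following Python sketch. It assumes glob-style matching on cluster and namespace names, which is an assumption of this example and not a statement about how Gloo Mesh selects namespaces. The selector list and the `in_workspace` function are hypothetical.

```python
from fnmatch import fnmatch

# Hypothetical selectors for a "frontend" workspace: (cluster pattern, namespace pattern).
FRONTEND_SELECTORS = [("*", "frontend-*")]


def in_workspace(cluster: str, namespace: str, selectors: list[tuple[str, str]]) -> bool:
    """Return True if the namespace on this cluster matches any selector."""
    return any(
        fnmatch(cluster, cluster_pattern) and fnmatch(namespace, namespace_pattern)
        for cluster_pattern, namespace_pattern in selectors
    )


print(in_workspace("cluster-1", "frontend-web", FRONTEND_SELECTORS))  # True
print(in_workspace("cluster-2", "frontend-api", FRONTEND_SELECTORS))  # True
print(in_workspace("cluster-2", "backend-db", FRONTEND_SELECTORS))    # False
```

In this model, adding `cluster-2` requires no change to the selectors as long as its namespaces follow the same naming scheme.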

Resources that you labeled for export are automatically created for you. For example, you might have route tables or virtual destinations in a workspace in one cluster. After setting up the import and export settings, you can export these resources to other workspaces. Then, your services in that workspace can respond to requests that are received in another cluster and workspace.

Set up traffic failover across clusters and environments

You have a multicluster hybrid environment across different zones and regions. But how do you configure high availability, failover, and traffic routing to the closest, available destination? With Gloo Mesh, you can use API resources to define how services behave, interact, and receive traffic.

For example, virtual destinations automatically send traffic to the nearest healthy backing service. With policies, you can configure reusable rules for security, resilience, observability, and other traffic control.
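The idea of routing to the nearest healthy backing service can be sketched as a selection function. This Python example is a conceptual model, not the algorithm that Gloo Mesh or Istio use. The `Backend` class, the `pick_backend` function, and the region and zone names are hypothetical, and "nearest" is simplified to same zone, then same region, then anywhere.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Backend:
    """Hypothetical backing service instance."""
    name: str
    region: str
    zone: str
    healthy: bool


def pick_backend(backends: list[Backend], region: str, zone: str) -> Optional[Backend]:
    """Prefer a healthy backend in the same zone, then the same region, then anywhere."""
    def distance(backend: Backend) -> int:
        if backend.region == region and backend.zone == zone:
            return 0
        if backend.region == region:
            return 1
        return 2

    healthy = [b for b in backends if b.healthy]
    # Returns None when no healthy backend exists.
    return min(healthy, key=distance, default=None)


backends = [
    Backend("reviews-a", "us-east", "us-east-1a", healthy=False),
    Backend("reviews-b", "us-east", "us-east-1b", healthy=True),
    Backend("reviews-c", "us-west", "us-west-2a", healthy=True),
]
choice = pick_backend(backends, region="us-east", zone="us-east-1a")
print(choice.name)  # reviews-b
```

The same-zone instance is unhealthy, so the request fails over to the healthy instance in the same region before considering the other region.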

Observe your service mesh traffic across environments

If you set up the Gloo OpenTelemetry pipeline during the Gloo Mesh Enterprise installation, metrics for your workloads are automatically collected and provided to the Gloo UI. You can use these metrics to observe all your service traffic more easily through an interactive UI, as shown in the following example clip. For more information, see Observability.