About CNIs

Learn what a Container Network Interface (CNI) is and how it is used in a cluster.

A Container Network Interface (CNI) is a Cloud Native Computing Foundation (CNCF) project that provides specifications and libraries for writing plug-ins that configure network interfaces for Linux containers. The CNI manages the network connectivity of containers, and adds or removes resources, such as IP addresses, when containers are created or deleted.

How is a CNI used in Kubernetes?

Kubernetes uses a CNI to implement the Kubernetes network model and to create and manage the network interfaces for pods. Without a CNI, no IP addresses can be assigned to pods, so neither pod-to-pod nor node-to-pod connectivity can be established. As a consequence, pods cannot be scheduled in the cluster and remain in the Pending state.

When you add a CNI to your Kubernetes cluster, the CNI defines how network connectivity for a pod is established and which network controls you can implement. Depending on the CNI plug-in that you use, an overlay or underlay network is created in your cluster. Overlay networks encapsulate pod traffic in an additional header, for example by using Virtual Extensible LAN (VXLAN), and tunnel it over the existing network between nodes. Underlay networks send pod traffic directly over the physical network infrastructure, such as switches and routers, without encapsulation.
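To make the cost of encapsulation concrete, the following Python sketch packs the 8-byte VXLAN header defined in RFC 7348 and computes the largest pod MTU that still fits an underlay network with a given MTU. It assumes a VXLAN overlay with IPv4 outer headers and no IP options; CNIs that use other encapsulations, or IPv6, have a different overhead. The function and constant names are hypothetical.

```python
import struct

VXLAN_FLAG_I = 0x08  # "I" flag: the VNI field is valid

# Bytes added to every pod packet, counted against the underlay IPv4 MTU.
INNER_ETHERNET = 14  # the pod's original Ethernet header travels inside the tunnel
VXLAN_HEADER = 8
OUTER_UDP = 8
OUTER_IPV4 = 20      # assumes no IP options
OVERHEAD = INNER_ETHERNET + VXLAN_HEADER + OUTER_UDP + OUTER_IPV4


def vxlan_header(vni):
    """Pack an 8-byte VXLAN header (RFC 7348) for a 24-bit VXLAN Network Identifier (VNI)."""
    if not 0 <= vni < 2**24:
        raise ValueError("VNI must fit in 24 bits")
    # Word 1: flags byte followed by 24 reserved bits.
    # Word 2: 24-bit VNI followed by 8 reserved bits.
    return struct.pack("!II", VXLAN_FLAG_I << 24, vni << 8)


def pod_mtu(underlay_mtu):
    """Largest MTU for a pod interface whose encapsulated packets still fit the underlay MTU."""
    return underlay_mtu - OVERHEAD
```

With a standard 1500-byte underlay MTU, pod_mtu(1500) returns 1450, which is why VXLAN-based overlays commonly give pod interfaces a 1450-byte MTU.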

When a pod is created in the cluster, the container runtime on the node calls the CNI plug-in and asks for the pod to be added to the cluster network (ADD operation). The CNI plug-in works with other plug-ins, such as an IP Address Management (IPAM) plug-in, to set up network connectivity for the pod. For example, the IPAM plug-in allocates an IP address to the pod. The result is then returned to the container runtime.
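The IPAM step can be sketched as follows. This Python class is a hypothetical, in-memory allocator that hands out addresses from a single subnet on ADD and releases them on DEL; it is not the implementation of any real IPAM plug-in. Real plug-ins such as host-local persist their allocations so that they survive restarts and support multiple address ranges, which this sketch leaves out. It also assumes that the first usable address of the subnet is reserved for the gateway.

```python
import ipaddress


class SubnetAllocator:
    """Hypothetical in-memory IPAM sketch: assigns addresses from one subnet, keyed by container ID."""

    def __init__(self, subnet):
        self.network = ipaddress.ip_network(subnet)
        # Assumption: the first usable address is reserved for the gateway.
        self.gateway = next(self.network.hosts())
        self.leases = {}  # container ID -> allocated address

    def add(self, container_id):
        """ADD: return the container's address in CIDR form, allocating one if needed."""
        if container_id not in self.leases:
            in_use = set(self.leases.values()) | {self.gateway}
            for candidate in self.network.hosts():
                if candidate not in in_use:
                    self.leases[container_id] = candidate
                    break
            else:
                raise RuntimeError(f"no free addresses left in {self.network}")
        return f"{self.leases[container_id]}/{self.network.prefixlen}"

    def delete(self, container_id):
        """DEL: release the container's address; releasing an unknown ID is not an error."""
        self.leases.pop(container_id, None)
```

For example, with SubnetAllocator("10.22.0.0/24"), the first pod receives 10.22.0.2/24 because 10.22.0.1 is held for the gateway, and an address freed by delete() is reused by the next add(). Calling add() twice for the same container returns the same address, so a repeated ADD does not leak addresses.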

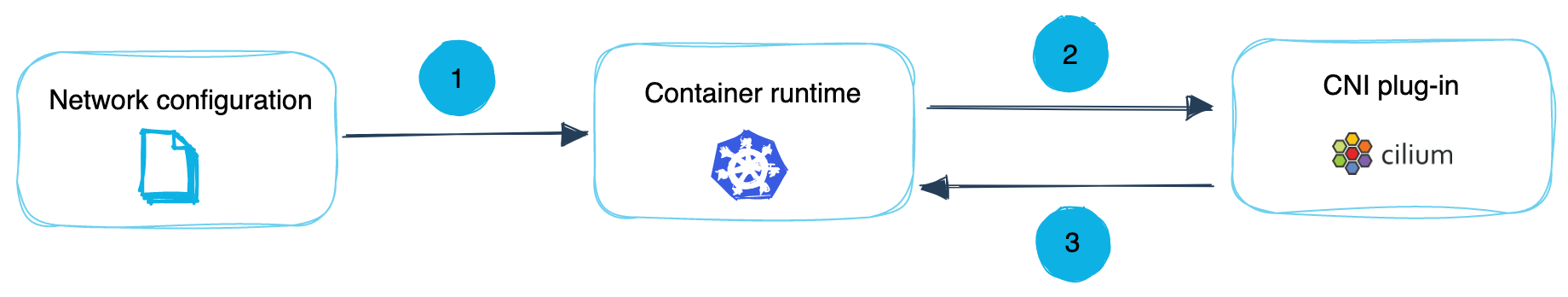

The following steps, illustrated in the preceding image, summarize how the container runtime and the CNI communicate with each other:

- You provide the CNI network configuration to the container runtime. The network configuration is specific to the CNI that you use and tells the container runtime how to interact with the CNI plug-in. For example, the configuration defines which network plug-ins to call, in what order to call them, and how functionality is delegated to other plug-ins, such as an IPAM plug-in. In addition, the cniVersion field of the network configuration selects the version of the CNI specification that applies, and that version defines the protocol for sending a request to a network plug-in, the request parameters that must be provided, and the structure of the data that the network plug-in returns.

- When a network operation must be performed, such as when a container or pod must be connected to or removed from the cluster network, the container runtime sends the corresponding request to the CNI plug-in. For example, to add a Kubernetes pod to the cluster network, the container runtime requests the ADD operation. The container runtime follows the procedure that is defined in the network configuration and calls each network plug-in, one by one, by using the outlined protocol and request parameters.

- The CNI plug-in performs the operation and returns a response to the container runtime. The format of the response follows the CNI specification version that is set in the network configuration that you provided to the container runtime. For example, a response could include the IP address that was assigned or the route that the plug-in configured.
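The exchange between the container runtime and the plug-ins can be sketched in Python. The sketch defines an example network configuration list (bridge as the interface plug-in, host-local as its delegated IPAM plug-in, and portmap as a meta plug-in) and shows how a runtime might derive each plug-in's environment variables and stdin: the CNI_* variables carry the operation and container details, the list's name and cniVersion are copied into each plug-in's configuration, and for ADD the result of one plug-in is passed to the next as prevResult. DEL visits the plug-ins in reverse order. The function names, the network name and subnet, the /opt/cni/bin path, and the fake_exec stand-in (used instead of launching real plug-in binaries) are assumptions for illustration; result caching and error handling are left out.

```python
import json

# Illustrative network configuration list; names and subnet are example values.
NETWORK_CONFIG = {
    "cniVersion": "1.0.0",
    "name": "example-net",
    "plugins": [
        {
            "type": "bridge",  # interface plug-in, called first on ADD
            "bridge": "cni0",
            "isGateway": True,
            "ipam": {"type": "host-local", "subnet": "10.22.0.0/16"},  # delegated IPAM plug-in
        },
        {"type": "portmap", "capabilities": {"portMappings": True}},  # meta plug-in
    ],
}


def build_invocation(plugin_conf, list_conf, command, container_id, netns, ifname,
                     prev_result=None):
    """Return the environment variables and stdin bytes for a single plug-in call."""
    env = {
        "CNI_COMMAND": command,
        "CNI_CONTAINERID": container_id,
        "CNI_NETNS": netns,
        "CNI_IFNAME": ifname,
        "CNI_PATH": "/opt/cni/bin",  # assumption: where the plug-in binaries are installed
    }
    conf = dict(plugin_conf)  # shallow copy, so NETWORK_CONFIG itself is not modified
    # Copy the list-level name and cniVersion into the plug-in's configuration.
    conf["cniVersion"] = list_conf["cniVersion"]
    conf["name"] = list_conf["name"]
    if prev_result is not None:
        conf["prevResult"] = prev_result  # result of the previous plug-in in the chain
    return env, json.dumps(conf).encode()


def run_chain(list_conf, command, container_id, netns, ifname, exec_plugin):
    """Call each plug-in in turn: in list order for ADD, in reverse order for DEL."""
    plugins = list_conf["plugins"]
    if command == "DEL":
        plugins = list(reversed(plugins))
    result = None
    for plugin_conf in plugins:
        env, stdin = build_invocation(
            plugin_conf, list_conf, command, container_id, netns, ifname,
            prev_result=result if command == "ADD" else None,
        )
        output = exec_plugin(plugin_conf["type"], env, stdin)
        if command == "ADD":
            result = json.loads(output)
    return result  # final ADD result for the runtime; None for DEL


CALLS = []  # records (plug-in type, command, received prevResult?) for inspection


def fake_exec(plugin_type, env, stdin):
    """Hypothetical stand-in for running the plug-in binary named plugin_type."""
    conf = json.loads(stdin)
    CALLS.append((plugin_type, env["CNI_COMMAND"], "prevResult" in conf))
    if env["CNI_COMMAND"] != "ADD":
        return b""
    # The first plug-in creates the result; chained plug-ins pass it through.
    result = conf.get("prevResult") or {
        "cniVersion": conf["cniVersion"],
        "ips": [{"address": "10.22.0.2/16"}],
    }
    return json.dumps(result).encode()
```

For example, run_chain(NETWORK_CONFIG, "ADD", "abc123", "/var/run/netns/abc123", "eth0", fake_exec) calls bridge and then portmap, and only portmap receives a prevResult; the same call with "DEL" calls portmap first.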

Overview of supported CNI operations

Every CNI plug-in must implement the following network operations:

- ADD: Create a network interface for a container or pod. This operation is used to add a container or pod to the cluster network.

- DEL: Remove the network interface of a container or pod and release the resources that were allocated to it, such as its IP address. This operation is used when a container or pod is deleted.

- CHECK: Verify that the network interface of a container or pod is still configured as expected. If the check fails, the plug-in returns an error.

- VERSION: Report the versions of the CNI specification that the CNI plug-in supports.
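A plug-in receives the requested operation in the CNI_COMMAND environment variable, and the CNI specification spells the delete operation DEL. The following hypothetical Python handler shows the shape of that dispatch and of the JSON that each operation prints. It does not touch real network interfaces, and the supported versions and the returned address are illustrative values.

```python
import json

# Spec versions this hypothetical plug-in claims to support (illustrative values).
SUPPORTED_VERSIONS = ["0.4.0", "1.0.0"]


def handle(env, stdin):
    """Dispatch on CNI_COMMAND and return the text the plug-in would print to stdout."""
    command = env.get("CNI_COMMAND")
    if command == "VERSION":
        # VERSION only reports which CNI specification versions are supported.
        return json.dumps({"cniVersion": "1.0.0", "supportedVersions": SUPPORTED_VERSIONS})

    conf = json.loads(stdin)  # the network configuration arrives on stdin
    if command == "ADD":
        # Placeholder: a real plug-in would create the interface inside CNI_NETNS here.
        return json.dumps({
            "cniVersion": conf["cniVersion"],
            "interfaces": [{"name": env["CNI_IFNAME"], "sandbox": env["CNI_NETNS"]}],
            "ips": [{"address": "10.22.0.2/16", "interface": 0}],  # index into "interfaces"
        })
    if command in ("DEL", "CHECK"):
        # Placeholder: DEL would remove the interface and release its address; CHECK would
        # verify that it still matches the configuration. Both print nothing on success.
        return ""
    # A real plug-in would print a CNI error object and exit with a non-zero status.
    raise ValueError(f"unsupported CNI_COMMAND: {command!r}")
```

Keeping VERSION ahead of the stdin parsing reflects that it needs no network configuration, while ADD, DEL, and CHECK all act on the configuration that the runtime supplies.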

Overview of plug-ins that a CNI can use

A CNI can leverage network plug-ins from the following plug-in groups to set up the network interface for a container or pod.

- Interface: Interface plug-ins create a connection from the container or pod in the container's network namespace to a virtual or physical interface in the root network namespace of the Linux host.

- IP Address Management (IPAM): Plug-ins from this group manage the pool of IP addresses that are assigned to a container or pod.

- Meta: Meta plug-ins use the network interface that was created by the interface plug-ins and perform additional network configuration inside the container, pod, or host.
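The division of labor between interface and meta plug-ins can be sketched as follows. In this hypothetical Python function, a meta plug-in reads the prevResult that an earlier interface plug-in produced, applies a setting to each interface inside the container's network namespace, and then prints the result unchanged so that it reaches the next plug-in or the runtime. The mtu configuration key and the apply_setting callback are assumptions that stand in for whatever a real meta plug-in configures.

```python
import json


def meta_plugin_add(stdin, apply_setting):
    """ADD for a hypothetical meta plug-in that works on interfaces created earlier in the chain."""
    conf = json.loads(stdin)
    prev = conf.get("prevResult")
    if prev is None:
        # Without a prevResult there is no interface to configure.
        raise ValueError("this meta plug-in must run after an interface plug-in")
    for iface in prev.get("interfaces", []):
        # Interfaces with a "sandbox" live in the container's network namespace;
        # the others (for example, a host-side bridge) are skipped here.
        if "sandbox" in iface:
            apply_setting(iface["name"], iface["sandbox"], conf.get("mtu"))
    # Pass the previous result on unchanged so that it reaches the next plug-in or the runtime.
    return json.dumps(prev)
```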

For more information about the plug-ins that belong to each plug-in group, see the CNI documentation.