Multicluster

Deploy Gloo Mesh Core across multiple clusters to gain valuable insights into your Istio service meshes.

Gloo Mesh Core deploys alongside your Istio installations in single or multicluster environments, and gives you instant insights into your Istio environment through a custom dashboard.

You can follow this guide to quickly get started with Gloo Mesh Core. To learn more about the benefits and architecture, see About. To customize your installation with Helm instead, see the advanced installation guide.

Before you begin

Install the following command-line (CLI) tools.

- kubectl, the Kubernetes command line tool. Download the kubectl version that is within one minor version of the Kubernetes clusters you plan to use.

- meshctl, the Solo command line tool.

    curl -sL https://run.solo.io/meshctl/install | GLOO_MESH_VERSION=v2.6.5 sh -
    export PATH=$HOME/.gloo-mesh/bin:$PATH

Create or use at least two existing Kubernetes clusters. The instructions in this guide assume one management cluster and two workload clusters.

- Each cluster name must be lowercase and alphanumeric, must begin with a letter (not a number), and can contain no special characters except a hyphen (-).
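The naming rule above can be checked before you export the cluster names. The following is a minimal sketch in POSIX shell. The function name valid_cluster_name is hypothetical and is not part of meshctl or kubectl.

```shell
# Hypothetical helper: succeeds only if the name is lowercase alphanumeric
# (hyphens allowed) and begins with a letter.
valid_cluster_name() {
  # LC_ALL=C keeps the a-z range limited to ASCII lowercase letters
  printf '%s\n' "$1" | LC_ALL=C grep -Eq '^[a-z][a-z0-9-]*$'
}

# Try the check on a few sample names
for name in mgmt cluster-1 1cluster my_cluster; do
  if valid_cluster_name "$name"; then
    echo "$name: ok"
  else
    echo "$name: invalid"
  fi
done
```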

Set the names of your clusters from your infrastructure provider. If your clusters have different names, specify those names instead.

    export MGMT_CLUSTER=mgmt
    export REMOTE_CLUSTER1=cluster1
    export REMOTE_CLUSTER2=cluster2

- Save the kubeconfig contexts for your clusters. Run kubectl config get-contexts, look for your cluster in the CLUSTER column, and get the context name in the NAME column. Note: Do not use context names with underscores. The generated certificate that connects workload clusters to the management cluster uses the context name as a SAN specification, and underscores in SAN are not FQDN compliant. You can rename a context by running kubectl config rename-context "<oldcontext>" <newcontext>.

    export MGMT_CONTEXT=<management-cluster-context>
    export REMOTE_CONTEXT1=<remote-cluster1-context>
    export REMOTE_CONTEXT2=<remote-cluster2-context>

Set your Gloo Mesh Core license key as an environment variable. If you do not have one, contact an account representative. If you prefer to specify license keys in a secret instead, see Licensing. To check your license’s validity, you can run meshctl license check --key $(echo ${GLOO_MESH_CORE_LICENSE_KEY} | base64 -w0).

    export GLOO_MESH_CORE_LICENSE_KEY=<license_key>
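The -w0 flag in the license check command is a GNU base64 option and might not be accepted by other base64 implementations, such as BSD variants. As a hedged alternative, the following sketch removes the line wraps with tr instead. It assumes only that base64 and tr are installed. The function name encode_license_key is hypothetical.

```shell
# Hypothetical helper: base64-encode a license key onto a single line
# without relying on the GNU-specific -w0 flag.
encode_license_key() {
  # The trailing newline mirrors what `echo` adds in the original command
  printf '%s\n' "$1" | base64 | tr -d '\n'
}

# Usage (requires meshctl and a real license key):
# meshctl license check --key "$(encode_license_key "${GLOO_MESH_CORE_LICENSE_KEY}")"
```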

Install Gloo Mesh Core

In a multicluster setup, you deploy the Gloo management plane into a dedicated management cluster, and the Gloo data plane into one or more workload clusters that run Istio service meshes.
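Before you run the install commands, it can help to confirm that the environment variables from the previous section are set and that no context name contains an underscore. The following is a minimal sketch of such a pre-flight check. The function name preflight is hypothetical, and the variable list simply mirrors the exports used in this guide.

```shell
# Hypothetical pre-flight helper: reports unset variables and context
# names that contain underscores. Returns non-zero if any check fails.
preflight() {
  status=0
  for var in MGMT_CLUSTER REMOTE_CLUSTER1 REMOTE_CLUSTER2 \
             MGMT_CONTEXT REMOTE_CONTEXT1 REMOTE_CONTEXT2 \
             GLOO_MESH_CORE_LICENSE_KEY; do
    # Look up the value of the variable whose name is stored in $var
    eval "value=\${$var:-}"
    if [ -z "$value" ]; then
      echo "missing: $var" >&2
      status=1
      continue
    fi
    # Only the context variables are subject to the underscore restriction
    case "$var" in
      *_CONTEXT*)
        case "$value" in
          *_*) echo "underscore in $var: $value" >&2; status=1 ;;
        esac
        ;;
    esac
  done
  return "$status"
}

# Usage:
# preflight && echo "environment looks ready"
```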

Management plane

Deploy the Gloo management plane into a dedicated management cluster.

Install Gloo Mesh Core in your management cluster. This command uses a basic profile to create a gloo-mesh namespace and install the Gloo management plane components, such as the management server and Prometheus server, in your management cluster. For more information, check out the CLI install profiles.

    meshctl install --profiles gloo-core-mgmt \
      --kubecontext $MGMT_CONTEXT \
      --set common.cluster=$MGMT_CLUSTER \
      --set licensing.glooMeshCoreLicenseKey=$GLOO_MESH_CORE_LICENSE_KEY

Verify that the management plane pods have a status of Running.

    kubectl get pods -n gloo-mesh --context $MGMT_CONTEXT

Example output:

    NAME                                      READY   STATUS    RESTARTS   AGE
    gloo-mesh-mgmt-server-56c495796b-cx687    1/1     Running   0          30s
    gloo-mesh-redis-8455d49c86-f8qhw          1/1     Running   0          30s
    gloo-mesh-ui-65b6b6df5f-bf4vp             3/3     Running   0          30s
    gloo-telemetry-collector-agent-7rzfb      1/1     Running   0          30s
    gloo-telemetry-gateway-6547f479d5-r4zm6   1/1     Running   0          30s
    prometheus-server-57cd8c74d4-2bc7f        2/2     Running   0          30s

Save the external address and port that your cloud provider assigned to the Gloo OpenTelemetry (OTel) gateway service. The OTel collector agents in each workload cluster send metrics to this address.

    export TELEMETRY_GATEWAY_IP=$(kubectl get svc -n gloo-mesh gloo-telemetry-gateway --context $MGMT_CONTEXT -o jsonpath="{.status.loadBalancer.ingress[0]['hostname','ip']}")
    export TELEMETRY_GATEWAY_PORT=$(kubectl get svc -n gloo-mesh gloo-telemetry-gateway --context $MGMT_CONTEXT -o jsonpath='{.spec.ports[?(@.name=="otlp")].port}')
    export TELEMETRY_GATEWAY_ADDRESS=${TELEMETRY_GATEWAY_IP}:${TELEMETRY_GATEWAY_PORT}
    echo $TELEMETRY_GATEWAY_ADDRESS
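If the load balancer address has not been assigned yet, the jsonpath query for the gateway can return an empty string, which leaves TELEMETRY_GATEWAY_ADDRESS with a missing host. The following sketch checks that the value has a host:port shape before you pass it to later commands. The function name valid_gateway_address is hypothetical, and the check is deliberately simple (it does not handle IPv6 literals).

```shell
# Hypothetical helper: succeeds only if the argument looks like host:port
# with a non-empty host and an all-digit port.
valid_gateway_address() {
  case "$1" in
    *:*) ;;            # must contain a colon
    *) return 1 ;;
  esac
  host=${1%:*}         # everything before the last colon
  port=${1##*:}        # everything after the last colon
  [ -n "$host" ] || return 1
  case "$port" in
    ''|*[!0-9]*) return 1 ;;   # empty or non-numeric port
  esac
  return 0
}

if valid_gateway_address "${TELEMETRY_GATEWAY_ADDRESS:-}"; then
  echo "gateway address: $TELEMETRY_GATEWAY_ADDRESS"
else
  echo "gateway address is not ready; re-run the export commands after the address is assigned" >&2
fi
```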

Data plane

Register each workload cluster with the Gloo management plane by deploying Gloo data plane components. A deployment named gloo-mesh-agent runs the Gloo agent in each workload cluster.

Register both workload clusters with the management server. These commands use a basic profile to create a gloo-mesh namespace and install the Gloo data plane components, such as the Gloo agent. For more information, check out the CLI install profiles.

    meshctl cluster register $REMOTE_CLUSTER1 \
      --kubecontext $MGMT_CONTEXT \
      --profiles gloo-core-agent \
      --remote-context $REMOTE_CONTEXT1 \
      --telemetry-server-address $TELEMETRY_GATEWAY_ADDRESS

    meshctl cluster register $REMOTE_CLUSTER2 \
      --kubecontext $MGMT_CONTEXT \
      --profiles gloo-core-agent \
      --remote-context $REMOTE_CONTEXT2 \
      --telemetry-server-address $TELEMETRY_GATEWAY_ADDRESS

Verify that the Gloo data plane components in each workload cluster are healthy. If not, try debugging the agent.

    meshctl check --kubecontext $REMOTE_CONTEXT1
    meshctl check --kubecontext $REMOTE_CONTEXT2

Example output:

    🟢 Gloo deployment status

    Namespace | Name                           | Ready | Status
    gloo-mesh | gloo-mesh-agent                | 1/1   | Healthy
    gloo-mesh | gloo-telemetry-collector-agent | 3/3   | Healthy

Verify that your Gloo Mesh Core setup is correctly installed. If not, try debugging the relay connection. Note that this check might take a few seconds to verify that:

- Your Gloo product licenses are valid and current.

- The Gloo CRDs are installed at the correct version.

- The management plane pods in the management cluster are running and healthy.

- The agents in the workload clusters are successfully identified by the management server.

    meshctl check --kubecontext $MGMT_CONTEXT

Example output:

    🟢 License status

     INFO  gloo-mesh-core enterprise license expiration is 25 Aug 24 10:38 CDT
     INFO  No GraphQL license module found for any product

    🟢 CRD version check

    🟢 Gloo deployment status

    Namespace | Name                           | Ready | Status
    gloo-mesh | gloo-mesh-mgmt-server          | 1/1   | Healthy
    gloo-mesh | gloo-mesh-redis                | 1/1   | Healthy
    gloo-mesh | gloo-mesh-ui                   | 1/1   | Healthy
    gloo-mesh | gloo-telemetry-collector-agent | 3/3   | Healthy
    gloo-mesh | gloo-telemetry-gateway         | 1/1   | Healthy
    gloo-mesh | prometheus-server              | 1/1   | Healthy

    🟢 Mgmt server connectivity to workload agents

    Cluster  | Registered | Connected Pod
    cluster1 | true       | gloo-mesh/gloo-mesh-mgmt-server-65bd557b95-v8qq6
    cluster2 | true       | gloo-mesh/gloo-mesh-mgmt-server-65bd557b95-v8qq6

    Connected Pod                                    | Clusters
    gloo-mesh/gloo-mesh-mgmt-server-65bd557b95-v8qq6 | 2
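Because the two registration commands in this section differ only in the cluster name and remote context, they can be wrapped in a small function. The following is a sketch, not an official workflow. The function name register_cluster and the DRY_RUN variable are hypothetical, while the meshctl flags are the same ones used in the registration step of this guide.

```shell
# Hypothetical wrapper around the registration command from this guide.
# When DRY_RUN=1, the command is printed instead of executed.
register_cluster() {
  cluster=$1
  context=$2
  # Rebuild the argument list as the full meshctl command
  set -- meshctl cluster register "$cluster" \
    --kubecontext "$MGMT_CONTEXT" \
    --profiles gloo-core-agent \
    --remote-context "$context" \
    --telemetry-server-address "$TELEMETRY_GATEWAY_ADDRESS"
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Usage (requires meshctl and the exports from the earlier steps):
# register_cluster "$REMOTE_CLUSTER1" "$REMOTE_CONTEXT1"
# register_cluster "$REMOTE_CLUSTER2" "$REMOTE_CONTEXT2"
```

Setting DRY_RUN=1 first lets you review the exact commands before anything is changed in your clusters.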

Deploy Istio

Check whether Istio control planes already exist in the workload clusters.

kubectl get pods -n istio-system --context $REMOTE_CONTEXT1

kubectl get pods -n istio-system --context $REMOTE_CONTEXT2

- If istiod pods exist in each workload cluster, such as in this example output, you already installed Istio control planes. Continue to the next step.

    NAME                          READY   STATUS    RESTARTS   AGE
    istiod-1-22-b65676555-g2vmr   1/1     Running   0          8d

    NAME                          READY   STATUS    RESTARTS   AGE
    istiod-1-22-7b96cb895-4nzv9   1/1     Running   0          8d

- If no istiod pods exist, you can use Gloo Mesh Core to quickly install and manage service meshes in each workload cluster for you. For more information about service mesh lifecycle management with Gloo, check out Service mesh lifecycle and Solo distributions of Istio.
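The istiod check above can also be scripted. The following sketch reads the output of kubectl get pods from standard input and reports whether any pod name begins with istiod. The function name has_istiod is hypothetical, and the check only looks at pod names, not at pod status.

```shell
# Hypothetical helper: reads `kubectl get pods` output on stdin and
# succeeds if at least one pod name starts with "istiod".
has_istiod() {
  awk '$1 ~ /^istiod/ { found = 1 } END { exit !found }'
}

# Usage (requires kubectl and the context exports from earlier steps):
# for ctx in "$REMOTE_CONTEXT1" "$REMOTE_CONTEXT2"; do
#   if kubectl get pods -n istio-system --context "$ctx" | has_istiod; then
#     echo "$ctx: istiod found"
#   else
#     echo "$ctx: no istiod pods"
#   fi
# done
```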

Deploy a sample app

To analyze your service mesh with Gloo Mesh Core, be sure to include your services in the mesh.

Explore the UI

Use the Gloo UI to evaluate the health and efficiency of your service mesh. You can review the analysis and insights for your service mesh, such as recommendations to harden your Istio environment and steps to implement them in your environment.

Launch the dashboard

Open the Gloo UI. The Gloo UI is served from the

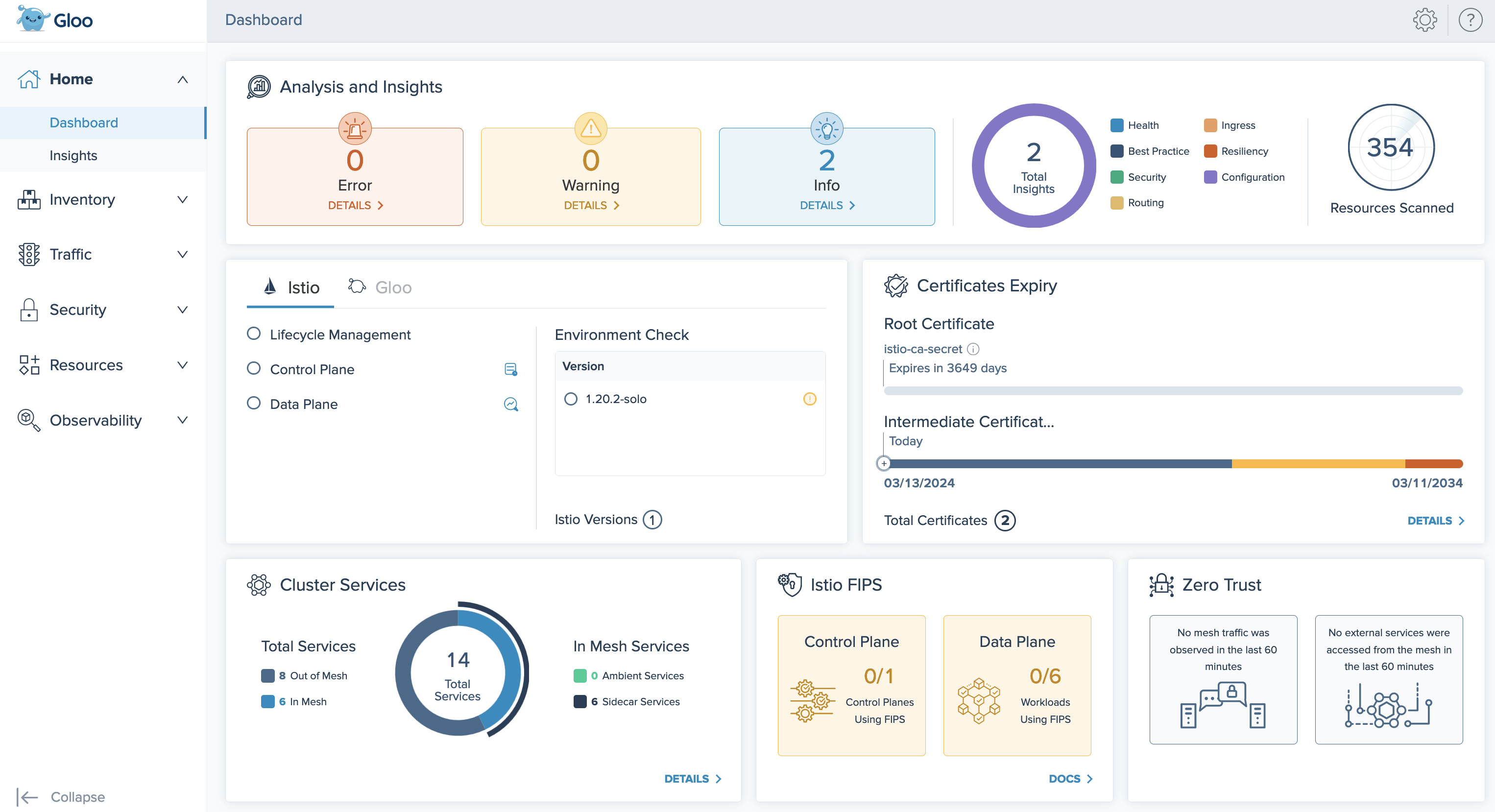

gloo-mesh-uiservice on port 8090. You can connect by using themeshctlorkubectlCLIs.Review your Dashboard for an at-a-glance overview of your Gloo Mesh Core environment. Environment insights, health, status, inventories, security, and more are summarized in the following cards:

- Analysis and Insights: Gloo Mesh Core recommendations for how to improve your Istio setups.

- Gloo and Istio health: A status check of the Gloo Mesh Core and Istio installations in each cluster.

- Certificates Expiry: Validity timelines for your root and intermediate Istio certificates.

- Cluster Services: Inventory of services across all clusters in your Gloo Mesh Core setup, and whether those services are in a service mesh or not.

- Istio FIPS: FIPS compliance checks for the istiod control planes and Istio data plane workloads.

- Zero Trust: Number of service mesh workloads that receive only mutual TLS (mTLS)-encrypted traffic, and number of external services that are accessed from the mesh.

Figure: Gloo UI dashboard
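To reach the gloo-mesh-ui service on port 8090 with kubectl, as mentioned at the start of this section, one option is a local port-forward. The following sketch only builds and prints the command so that you can review it first. The function name ui_port_forward_cmd is hypothetical, and the gloo-mesh namespace is assumed from the installation steps in this guide.

```shell
# Hypothetical helper: prints a kubectl port-forward command for the
# Gloo UI service in the given kubeconfig context.
ui_port_forward_cmd() {
  echo "kubectl port-forward -n gloo-mesh --context $1 svc/gloo-mesh-ui 8090:8090"
}

# Usage (requires kubectl): run the printed command, then open
# http://localhost:8090 in your browser.
# ui_port_forward_cmd "$MGMT_CONTEXT"
```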

Check insights

Review the insights for your environment. Gloo Mesh Core comes with an insights engine that automatically analyzes your Istio setups for health issues. Then, Gloo shares these issues along with recommendations to harden your Istio setups. The insights give you a checklist to address issues that might otherwise be hard to detect across your environment.

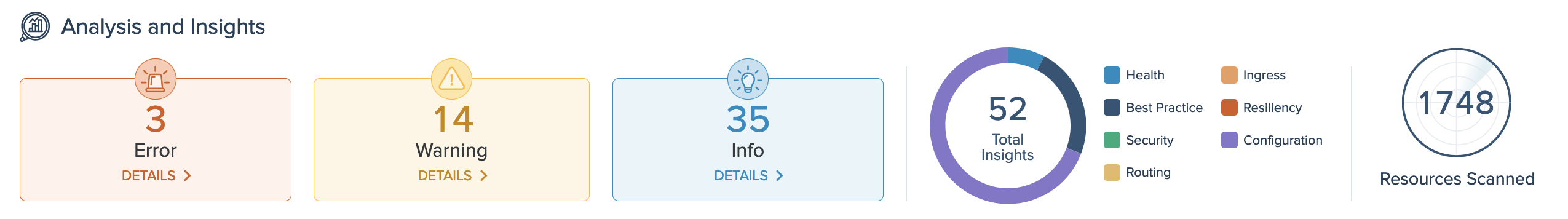

On the Analysis and Insights card of the dashboard, you can quickly see a summary of the insights for your environment, including how many insights are available at each severity level, and the type of insight.

Figure: Insights and analysis card

View the list of insights by clicking the Details button, or go to the Insights page.

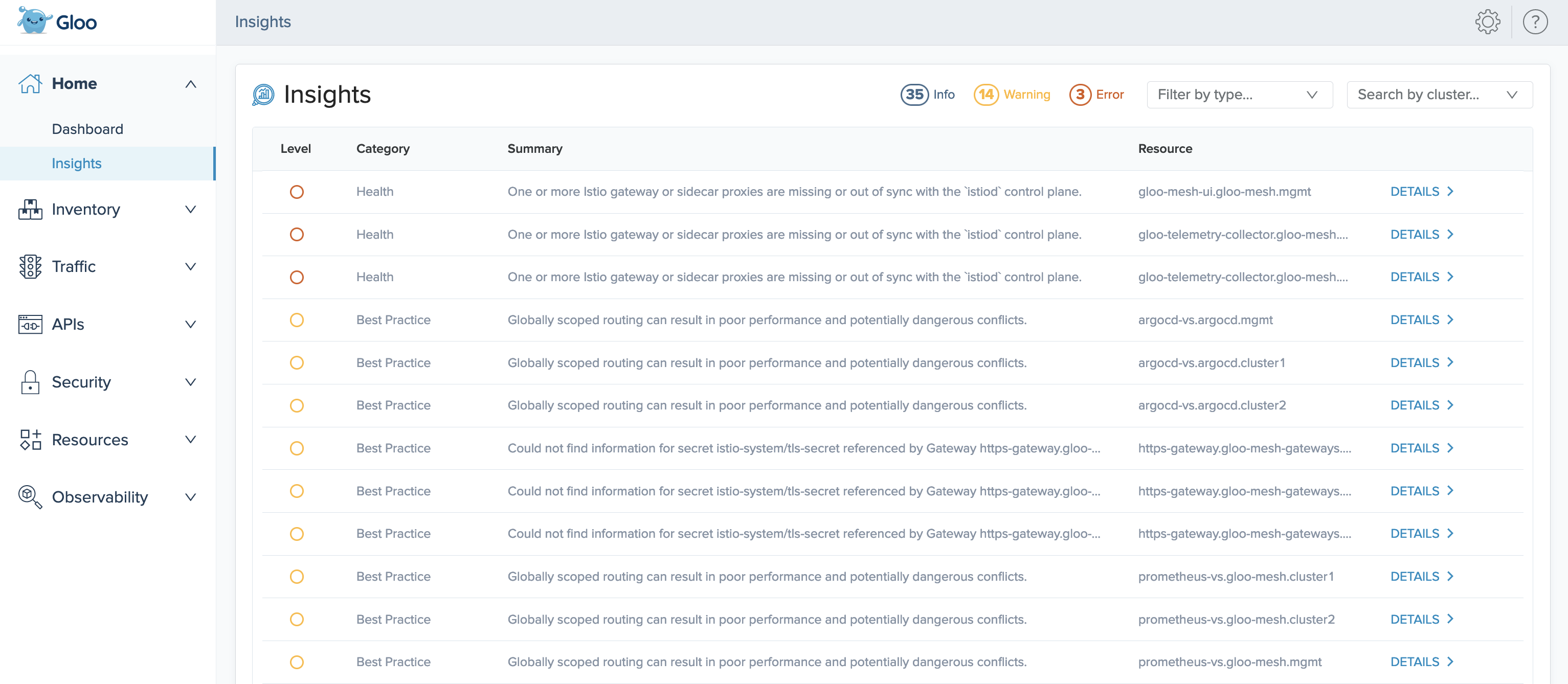

On the Insights page, you can view recommendations to harden your Istio setup, and steps to implement them in your environment. Gloo Mesh Core analyzes your setup, and returns individual insights that contain information about errors and warnings in your environment, best practices you can use to improve your configuration and security, and more.

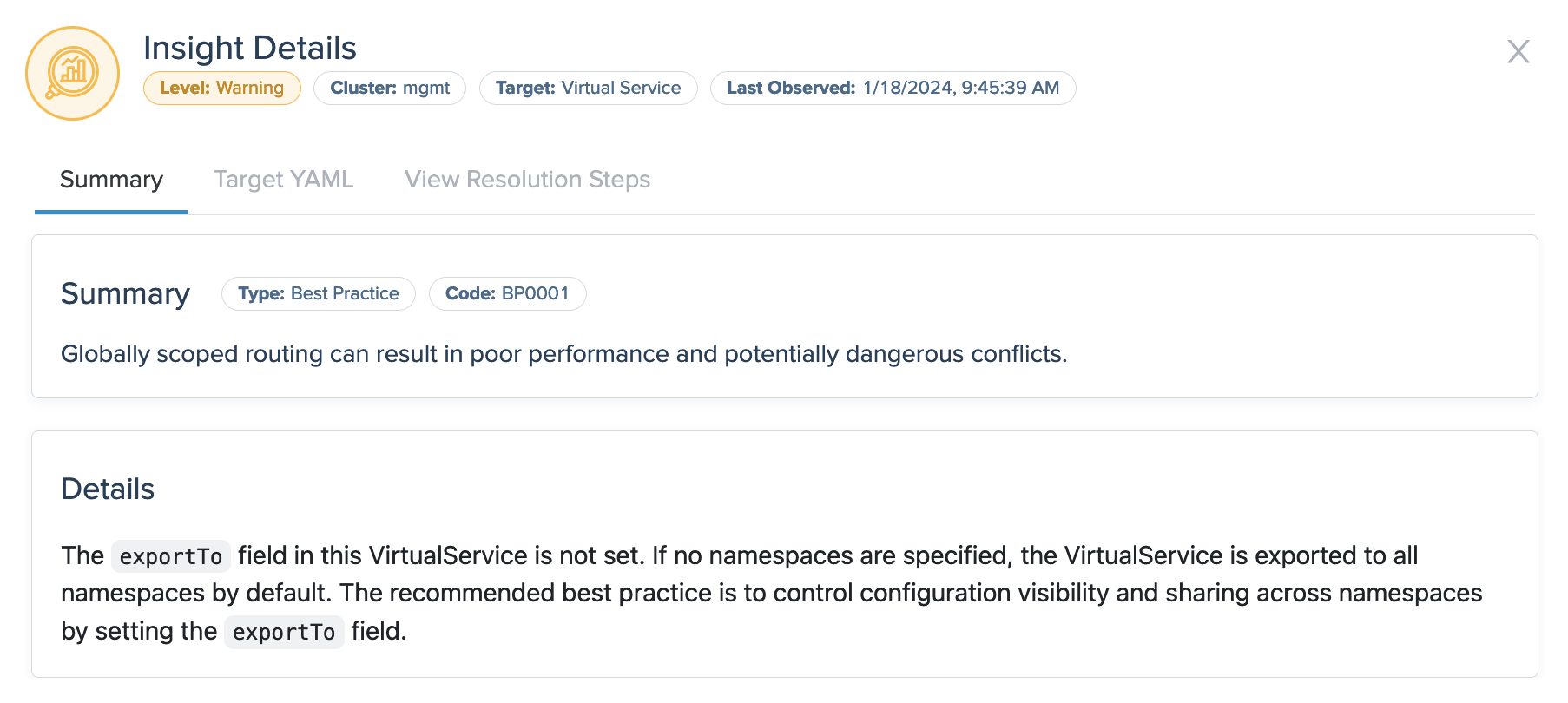

Figure: Insights page

On an insight that you want to resolve, click Details. The details modal shows more data about the insight, such as the time when it was last observed in your environment, and if applicable, the extended settings or configuration that the insight applies to.

Figure: Example insight

Click the Target YAML tab to see the resource file that the insight references, and click the View Resolution Steps tab to see guidance such as steps for fixing warnings and errors in your resource configuration or recommendations for improving your security and setup.

Next steps

Now that you have Gloo Mesh Core and Istio up and running, check out some of the following resources to learn more about Gloo Mesh Core and expand your service mesh capabilities.

Istio:

- Find out more about hardened Istio n-4 version support built into Solo distributions of Istio.

- Check out the Istio docs to configure and deploy Istio routing resources.

- Monitor and observe your Istio environment with Gloo Mesh Core’s built-in telemetry tools.

- When it’s time to upgrade Istio, use Gloo Mesh Core to upgrade managed Istio installations.

For ambient installations, see Upgrade ambient service meshes.

Gloo Mesh Core:

- Customize your Gloo Mesh Core installation with a Helm-based setup.

Help and support:

- Talk to an expert to get advice or build out a proof of concept.

- Join the #gloo-mesh channel in the Solo.io community Slack.

- Try out one of the Gloo workshops.

Cleanup

If you no longer need this quick-start Gloo Mesh Core environment, you can follow the steps in the uninstall guide.