Install Portal with analytics

Install the Gloo components required to run the developer portal with analytics.

Set up Portal with monitoring analytics so that you can review usage information about the API products that are exposed in your developer portal. You can review information such as total requests, total users, total services, and error rates.

Monitoring helps you verify that the different components you set up for Portal are running as expected. For example, you can monitor the status of the portal server, portal frontend app, ingress gateway, external auth server, rate limiter, and backing Redis database. Additionally, you can monitor the status of the Gloo custom resources you configured Portal with, including route tables and policies. You can also enable the ingress gateway to log analytics about your portal API products.

About analytics

As an API product owner, you can collect analytics about your API usage. This way, you can identify ways to better monetize your API products based on actual usage. You can turn on several Gloo Mesh Gateway features to collect analytics for the API products that your portal exposes.

Review the following diagram and description of Portal API Analytics.

When an end user queries one of your APIs, your gateway handles the request. You can enable the gateway to generate access logs, so that the OpenTelemetry (OTel) metrics pipeline that you set up stores the access logs in a Clickhouse database. As the Portal admin, you can then view usage information for your API products in the Gloo UI through a Grafana dashboard.

The API analytics you can collect from the access logs include the following:

- Total requests of a consumer: Count of all requests from one user email address.

- Average error rate: Percentage of requests with error status codes.

- Total consumers: Count of distinct user email addresses that sent requests.

- Total services: Count of all services that receive traffic from users.
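The following shell sketch computes the four definitions above from simplified access-log records. Several parts are assumptions made for illustration: the record format (one `email,service,status` line per request), the function names, and the rule that any status code of 400 or higher counts as an error. The Grafana dashboard queries Clickhouse directly, and its exact definitions might differ.

```shell
# Hypothetical sketch: compute portal analytics from simplified access-log
# records read on stdin, one "email,service,status" line per request.
# Assumption: any status code of 400 or higher counts as an error.

# Prints overall totals as key=value lines.
portal_metrics() {
  awk -F',' '
    {
      total++
      per_consumer[$1]++          # requests per email address
      services[$2] = 1            # set of services that received traffic
      if ($3 + 0 >= 400) errors++
    }
    END {
      consumers = 0
      for (c in per_consumer) consumers++
      n_services = 0
      for (s in services) n_services++
      rate = (total > 0) ? 100 * errors / total : 0
      printf "total_requests=%d\n", total
      printf "error_rate_pct=%.1f\n", rate
      printf "total_consumers=%d\n", consumers
      printf "total_services=%d\n", n_services
    }'
}

# Prints the total requests for one consumer, identified by email address.
requests_for_consumer() {
  awk -F',' -v email="$1" '$1 == email { n++ } END { print n + 0 }'
}
```

The awk associative arrays deduplicate email addresses and service names. This is why "total consumers" counts distinct users and not requests.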

Before you begin

Create or use an existing Kubernetes or OpenShift cluster, and save the cluster name in an environment variable. Note: The cluster name must be alphanumeric with no special characters except a hyphen (-), lowercase, and begin with a letter (not a number).
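Before you export the variable, you can check a candidate name against these rules. The following is a minimal sketch. The function name `is_valid_cluster_name` is hypothetical, and the function encodes only the rules stated above.

```shell
# Sketch: succeed only if $1 uses lowercase letters, digits, and hyphens,
# and starts with a lowercase letter (the cluster name rules above).
# Note: [[:lower:]] can also match non-ASCII lowercase letters in UTF-8 locales.
is_valid_cluster_name() {
  printf '%s\n' "$1" | grep -Eq '^[[:lower:]][[:lower:][:digit:]-]*$'
}
```

For example, `is_valid_cluster_name my-cluster || echo "invalid cluster name" >&2` prints nothing for a valid name.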

```shell
export CLUSTER_NAME=<cluster_name>
```

Set your Gloo Mesh Gateway license key as an environment variable. If you do not have one, contact an account representative. If you prefer to specify license keys in a secret instead, see Licensing. To check your license’s validity, you can run `meshctl license check --key $(echo ${GLOO_MESH_GATEWAY_LICENSE_KEY} | base64 -w0)`.

```shell
export GLOO_MESH_GATEWAY_LICENSE_KEY=<license_key>
```

Set the Gloo Mesh Gateway version. This example uses the latest version. You can find other versions in the Changelog documentation. Append `-fips` for a FIPS-compliant image, such as `2.5.9-fips`. Do not include `v` before the version number.

```shell
export GLOO_VERSION=2.5.9
```
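A typo in the version, such as a leading `v`, surfaces only later as a failed chart lookup. The following sketch checks the format described above. The function name is hypothetical, and the sketch assumes plain `MAJOR.MINOR.PATCH` releases, so it rejects pre-release tags such as beta builds.

```shell
# Sketch: succeed if $1 looks like MAJOR.MINOR.PATCH with an optional
# -fips suffix and no leading "v". Assumption: pre-release tags are not
# expected, so values such as 2.5.9-beta1 are rejected.
is_valid_gloo_version() {
  printf '%s\n' "$1" | grep -Eq '^[[:digit:]]+\.[[:digit:]]+\.[[:digit:]]+(-fips)?$'
}
```

For example, `is_valid_gloo_version "$GLOO_VERSION" || echo "unexpected version format" >&2`.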

Set up Portal with analytics

Complete the following steps to set up Gloo Mesh Gateway with the portal server and configure the ingress gateway to collect portal-related analytics.

Step 1: Install Portal

Choose from the following options to install the required Portal components to collect API usage analytics during an initial installation or upgrade of Gloo Mesh Gateway.

Installation option

Set up the required Portal components as part of your initial Gloo Mesh Gateway installation.

Add and update the Helm repository for Gloo Mesh Gateway.

```shell
helm repo add gloo-platform https://storage.googleapis.com/gloo-platform/helm-charts
helm repo update
```

Apply the Gloo Mesh Gateway CRDs to your cluster by creating a `gloo-platform-crds` Helm release. Note: If you plan to manually deploy and manage your Istio installation in workload clusters rather than using Solo’s Istio lifecycle manager, include the `--set installIstioOperator=false` flag to ensure that the Istio operator CRD is not managed by this Gloo CRD Helm release.

```shell
helm install gloo-platform-crds gloo-platform/gloo-platform-crds \
  --namespace=gloo-mesh \
  --create-namespace \
  --version $GLOO_VERSION
```

Create a secret with the password to use to store access logs in Clickhouse. This example uses `password` as the password, base64-encoded as `cGFzc3dvcmQ=`. Note that this secret must be in each cluster and namespace where you deploy the Gloo OTel pipeline.

```shell
cat << EOF | kubectl apply -f -
apiVersion: v1
kind: Secret
metadata:
  name: clickhouse-auth
  namespace: gloo-mesh
type: Opaque
data:
  # password = password
  password: cGFzc3dvcmQ=
EOF
```

Create a Helm values file for your Gloo Mesh Gateway installation.

```shell
touch gloo-gateway-single.yaml
open gloo-gateway-single.yaml
```

In the Helm values file, include the components needed for Portal analytics and save the file.

Create another Helm values file for the add-ons that you need.

```shell
touch gloo-gateway-addons.yaml
open gloo-gateway-addons.yaml
```

In the Helm values file, include the external auth service, rate limiter, and portal server add-ons, and save the file. The following example also sets up the local Redis instance as backing storage for the servers. For more backing storage options, such as bringing your own Redis instance with auth, see the Backing Redis databases guide.

```yaml
common:
  addonNamespace: gloo-mesh
extAuthService:
  enabled: true
  extAuth:
    apiKeyStorage:
      name: redis
      enabled: true
      config:
        host: "redis.gloo-mesh:6379"
        db: 0
      secretKey: "ThisIsSecret"
glooPortalServer:
  enabled: true
  apiKeyStorage:
    redis:
      enabled: true
      address: redis.gloo-mesh:6379
    configPath: /etc/redis-client-config/config.yaml
    secretKey: "ThisIsSecret"
rateLimiter:
  enabled: true
```

Install the Gloo Mesh Gateway management plane and data plane components in your cluster, including the customizations in your Helm values file.

```shell
helm install gloo-platform gloo-platform/gloo-platform \
  --namespace gloo-mesh \
  --version $GLOO_VERSION \
  --values gloo-gateway-single.yaml \
  --set common.cluster=$CLUSTER_NAME \
  --set licensing.glooGatewayLicenseKey=$GLOO_MESH_GATEWAY_LICENSE_KEY
```

Install the Gloo Mesh Gateway add-ons in a separate Helm release.
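The add-ons install command is not shown in this step. The following is a hedged sketch, not the documented command. It installs the add-ons values file as a second release of the same chart and rests on two assumptions. The release name `gloo-agent-addons` is the example name used in the upgrade option, and the `gloo-mesh` namespace matches `common.addonNamespace` in the values file. The wrapper function name is hypothetical.

```shell
# Sketch (assumed release name and namespace): install the add-ons defined
# in gloo-gateway-addons.yaml as a separate release of the gloo-platform chart.
install_gloo_addons() {
  helm install gloo-agent-addons gloo-platform/gloo-platform \
    --namespace gloo-mesh \
    --version "$GLOO_VERSION" \
    --values gloo-gateway-addons.yaml \
    --set common.cluster="$CLUSTER_NAME"
}
```

Run `install_gloo_addons` after you export `GLOO_VERSION` and `CLUSTER_NAME`. Keeping the add-ons in their own release lets you upgrade them separately, as the upgrade option describes.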

Verify that Portal and the related components are installed.

```shell
meshctl check
```

In the example output, make sure that the portal, external auth, and rate limiting servers and all of the core Gloo Mesh Gateway components are healthy.

```
🟢 Gloo Mesh Gateway License Status

INFO  gloo-gateway enterprise license expires on 05 Nov 23 14:18 EST

🟢 CRD Version check

🟢 Gloo deployment status

Namespace | Name                           | Ready | Status
gloo-mesh | ext-auth-service               | 1/1   | Healthy
gloo-mesh | gloo-mesh-agent                | 1/1   | Healthy
gloo-mesh | gloo-mesh-mgmt-server          | 1/1   | Healthy
gloo-mesh | gloo-mesh-portal-server        | 1/1   | Healthy
gloo-mesh | gloo-mesh-redis                | 1/1   | Healthy
gloo-mesh | gloo-mesh-ui                   | 1/1   | Healthy
gloo-mesh | prometheus-server              | 1/1   | Healthy
gloo-mesh | rate-limiter                   | 1/1   | Healthy
gloo-mesh | redis                          | 1/1   | Healthy
gloo-mesh | gloo-telemetry-collector-agent | 3/3   | Healthy

🟢 Mgmt server connectivity to workload agents

Cluster  | Registered | Connected Pod
cluster1 | true       | gloo-mesh/gloo-mesh-mgmt-server-65bd557b95-v8qq6
```

Verify that the gateway proxy service is created and assigned an external IP address. It might take a few minutes for the load balancer to deploy.

```shell
kubectl get svc -n gloo-mesh-gateways
```

Example output:

```
NAME                   TYPE           CLUSTER-IP      EXTERNAL-IP      PORT(S)                                                      AGE
istio-ingressgateway   LoadBalancer   10.XX.XXX.XXX   35.XXX.XXX.XXX   15021:30826/TCP,80:31257/TCP,443:30673/TCP,15443:30789/TCP   48s
```
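The example request in Step 4 uses an `INGRESS_GW_IP` variable, which you can fill from this output. The following sketch reads the `kubectl get svc` table on stdin and prints the EXTERNAL-IP column for one service. It assumes the default column order shown in the example output, and the function name is hypothetical.

```shell
# Sketch: print the EXTERNAL-IP (4th column) of the named service from
# `kubectl get svc` table output on stdin. Prints "<pending>" while the
# load balancer is still being provisioned.
external_ip_of() {
  awk -v svc="$1" 'NR > 1 && $1 == svc { print $4 }'
}
```

For example: `export INGRESS_GW_IP=$(kubectl get svc -n gloo-mesh-gateways | external_ip_of istio-ingressgateway)`. kubectl's JSONPath output avoids table parsing: `kubectl get svc istio-ingressgateway -n gloo-mesh-gateways -o jsonpath='{.status.loadBalancer.ingress[0].ip}'`. Some cloud providers report a `hostname` field instead of `ip`.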

Now that your Gloo Mesh Gateway components for Portal are installed, set up Grafana.

Upgrade option

Set up the required Portal components by upgrading your existing Gloo Mesh Gateway installation.

Check the Helm releases in your cluster. Depending on your installation method, you either have only a main installation release (such as `gloo-platform`), or a main installation and a separate add-ons release (such as `gloo-agent-addons`), in addition to your CRDs release.

```shell
helm list --all-namespaces
```

Example of separate releases for platform and add-ons:

```
NAME                 NAMESPACE   REVISION   UPDATED                                STATUS     CHART
gloo-agent-addons    gloo-mesh   1          2023-09-01 13:29:03.136686 -0400 EDT   deployed   gloo-platform-2.4.0
gloo-platform        gloo-mesh   1          2023-09-01 13:26:56.061102 -0400 EDT   deployed   gloo-platform-2.4.0
gloo-platform-crds   gloo-mesh   1          2023-09-01 13:23:56.061102 -0400 EDT   deployed   gloo-platform-crds-2.4.0
```

Get your current installation values.

If you have only one release for your installation, get those values. Note that your Helm release might have a different name.

```shell
helm get values gloo-platform -n gloo-mesh -o yaml > gloo-gateway-single.yaml
open gloo-gateway-single.yaml
```

If you have a separate add-ons release, get those values.

```shell
helm get values gloo-agent-addons -n gloo-mesh -o yaml > gloo-agent-addons.yaml
open gloo-agent-addons.yaml
```
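If you are not sure which of the two layouts you have, the following sketch reads `helm list` output on stdin. It prints `separate` when an add-ons release is present and `single` otherwise. It assumes the add-ons release uses the example name `gloo-agent-addons` unless you pass another name, and the function name is hypothetical.

```shell
# Sketch: report whether the add-ons run in their own Helm release.
# $1 is the add-ons release name (default: the example name gloo-agent-addons).
release_layout() {
  awk -v name="${1:-gloo-agent-addons}" '
    NR > 1 && $1 == name { found = 1 }
    END { print (found ? "separate" : "single") }'
}
```

For example, `helm list -n gloo-mesh | release_layout` tells you which of the upgrade commands that follow apply to your installation.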

Add or edit the following settings for the required add-ons, including the external auth, rate limiting, and portal servers. The following example also sets up the local Redis instance to be used for backing storage for the servers. For more backing storage options, see the Backing Redis databases guide.

Upgrade your Helm release with the Helm values that you previously prepared.

- If you have only one release for your installation, upgrade the `gloo-platform` release.

```shell
helm upgrade gloo-platform gloo-platform/gloo-platform \
  --namespace gloo-mesh \
  -f gloo-gateway-single.yaml \
  --version $GLOO_VERSION
```

- If you have a separate add-ons release, upgrade the `gloo-agent-addons` release as well.

```shell
helm upgrade gloo-agent-addons gloo-platform/gloo-platform \
  --namespace gloo-mesh \
  -f gloo-agent-addons.yaml \
  --version $GLOO_VERSION
```


Verify that Portal and the related components are installed.

```shell
meshctl check
```

In the example output, make sure that the portal, external auth, and rate limiting servers and all of the core Gloo Mesh Gateway components are healthy.

```
🟢 Gloo Mesh Gateway License Status

INFO  gloo-gateway enterprise license expires on 05 Nov 23 14:18 EST

🟢 CRD Version check

🟢 Gloo deployment status

Namespace | Name                           | Ready | Status
gloo-mesh | ext-auth-service               | 1/1   | Healthy
gloo-mesh | gloo-mesh-agent                | 1/1   | Healthy
gloo-mesh | gloo-mesh-mgmt-server          | 1/1   | Healthy
gloo-mesh | gloo-mesh-portal-server        | 1/1   | Healthy
gloo-mesh | gloo-mesh-redis                | 1/1   | Healthy
gloo-mesh | gloo-mesh-ui                   | 1/1   | Healthy
gloo-mesh | prometheus-server              | 1/1   | Healthy
gloo-mesh | rate-limiter                   | 1/1   | Healthy
gloo-mesh | redis                          | 1/1   | Healthy
gloo-mesh | gloo-telemetry-collector-agent | 3/3   | Healthy

🟢 Mgmt server connectivity to workload agents

Cluster  | Registered | Connected Pod
cluster1 | true       | gloo-mesh/gloo-mesh-mgmt-server-65bd557b95-v8qq6
```

Now that your Gloo Mesh Gateway components for Portal are installed, set up Grafana.

Step 2: Set up Grafana to use Clickhouse

You installed or upgraded Gloo Mesh Gateway with the add-ons to run the developer portal and collect access logs for your APIs. Now, you can configure a Grafana instance to pull the data stored in Clickhouse.

- Verify that the Clickhouse resources are healthy.

```shell
kubectl get all -A -l app.kubernetes.io/name=clickhouse
```

- Install or upgrade Grafana to use the Clickhouse database, such as with the following commands. Note that the command reads the Clickhouse password from the `clickhouse-auth` secret that you previously created.

```shell
helm repo add prometheus-community https://prometheus-community.github.io/helm-charts
helm repo update
helm upgrade --install kube-prometheus-stack \
  prometheus-community/kube-prometheus-stack \
  --version 30.0.3 \
  --namespace monitoring \
  --create-namespace \
  --set "grafana.additionalDataSources[0].secureJsonData.password=$(kubectl get secret clickhouse-auth -n gloo-mesh -o jsonpath='{.data.password}' | base64 -d)" \
  --values - <<EOF
grafana:
  service:
    type: LoadBalancer
  plugins:
  - grafana-clickhouse-datasource
  additionalDataSources:
  - name: ClickHouse
    type: grafana-clickhouse-datasource
    isDefault: false
    uid: clickhouse-access-logs
    jsonData:
      defaultDatabase: default
      port: 9000
      server: clickhouse.gloo-mesh
      username: default
      tlsSkipVerify: true
EOF
```

- Verify that the Grafana deployment and the rest of the Prometheus stack are healthy.

```shell
kubectl get pods -n monitoring
```

If Clickhouse or Grafana are not healthy, try the Debugging steps.
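The `clickhouse-auth` secret stores the password base64-encoded in its `data` field. The following sketch produces that value and checks an existing one, and its function names are hypothetical. Encoding with `echo` adds a trailing newline to the stored password. Command substitution, such as the `$(... | base64 -d)` in the Grafana command, strips trailing newlines. As a result, Grafana might end up with a password that differs from the one Clickhouse reads from the secret.

```shell
# Sketch: base64-encode a value for a Secret's data field.
# printf '%s' avoids the trailing newline that echo would add
# (echo password | base64 gives cGFzc3dvcmQK instead of cGFzc3dvcmQ=).
encode_secret_value() {
  printf '%s' "$1" | base64
}

# Succeeds if a base64 value decodes to text that ends in a newline byte (0a).
decodes_with_trailing_newline() {
  [ "$(printf '%s' "$1" | base64 -d | tail -c 1 | od -An -tx1 | tr -d ' \n')" = "0a" ]
}
```

To check the secret in your cluster, pass the stored value in: `decodes_with_trailing_newline "$(kubectl get secret clickhouse-auth -n gloo-mesh -o jsonpath='{.data.password}')" && echo "password ends in a newline"`.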

Now that your Gloo Mesh Gateway components are set up, you can add the Grafana dashboard for portal usage analytics.

Step 3: Add the portal usage analytics dashboard to Grafana

You can use the sample Gloo Mesh Gateway Portal API analytics dashboard to monitor portal usage.

- Set up port forwarding on your local machine to access the Grafana dashboard.

```shell
kubectl port-forward $(kubectl get pods -n monitoring -o name | grep grafana) 8080:3000 -n monitoring
```

- Open the Grafana dashboard in your web browser at http://localhost:8080, the local port that you forwarded.

- Log in to the Grafana dashboard with `admin` as the username and `prom-operator` as the password. These are the default credentials that are set by the Prometheus community chart. You can change these credentials when you log in to Grafana.

- Import the Gloo Mesh Gateway Portal API analytics dashboard.

  - Download the JSON file that holds the configuration for the Gloo Mesh Gateway Portal API analytics dashboard.

  - From the Grafana menu, select + > Import.

  - Click Upload JSON file and select the file for the API analytics dashboard that you downloaded.

  - Click Import to open the API analytics dashboard.

Good job! You set up the Grafana dashboard to monitor the API usage of your developer portal.

Step 4: Monitor API usage with the Grafana dashboard

With Portal and the related logging components installed, you can monitor the API usage in your developer portal.

- Create your API products.

- Prepare usage plans for your API products.

- Configure a developer portal.

- Generate traffic to your API products, either from your users or such as with the following example request to the Tracks API product .

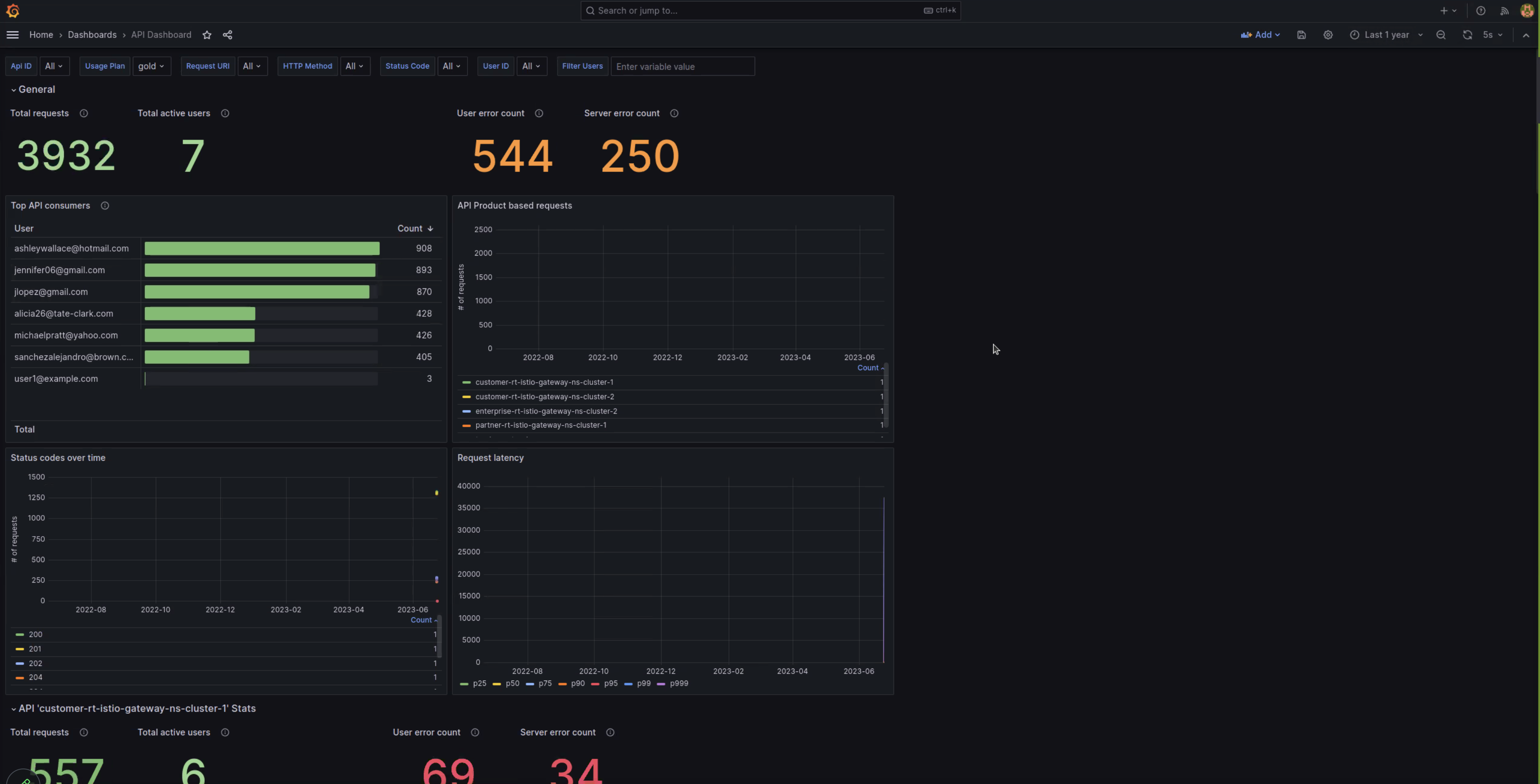

curl -v --resolve api.example.com:80:${INGRESS_GW_IP} http://api.example.com/trackapi/tracks - Explore the API usage analytics, such as in the following example. You can filter by several properties, such as APIs, usage plans, methods, status codes, and more.
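A single request barely registers in the dashboard. The following sketch repeats the example request and tallies the HTTP status codes that come back. It assumes that `INGRESS_GW_IP` is set and that `api.example.com` routes to the Tracks API, as in the example request. The function names are hypothetical.

```shell
# Sketch: send $1 (default 20) requests to the example Tracks API and print
# one HTTP status code per line.
generate_traffic() {
  count="${1:-20}"
  i=0
  while [ "$i" -lt "$count" ]; do
    curl -s -o /dev/null -w '%{http_code}\n' \
      --resolve "api.example.com:80:${INGRESS_GW_IP}" \
      http://api.example.com/trackapi/tracks
    i=$((i + 1))
  done
}

# Reads one status code per line and prints "<code> <count>" for each code.
summarize_status_codes() {
  sort | uniq -c | awk '{ print $2, $1 }'
}
```

For example, `generate_traffic 50 | summarize_status_codes`. Responses such as 429 from a usage plan's rate limit are error status codes, so they would likely raise the average error rate in the dashboard.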

Other monitoring tools

To help you monitor your environment, Gloo Mesh Gateway provides several tools as follows:

- Gloo UI, which features tabs to help debug your gateways, APIs, portals, and policies.

- Global status reporting on Gloo custom resources, including whether the resource is accepted.

- Logs for the deployments, such as the portal server or ingress gateway.

- API usage analytics for the API products that are exposed in your developer portal.

- Troubleshooting documentation for Gloo Mesh Gateway, including a debug guide for portal API usage analytics.